Building off previous robotic advancements, a team of researchers has found a way to program robots to better predict a person’s movement trajectory, enabling the integration of robots and humans in manufacturing.

Scientists from the Massachusetts Institute of Technology (MIT) have created an algorithm that accurately aligns partial trajectories in real time, giving robots the ability to anticipate human motion.

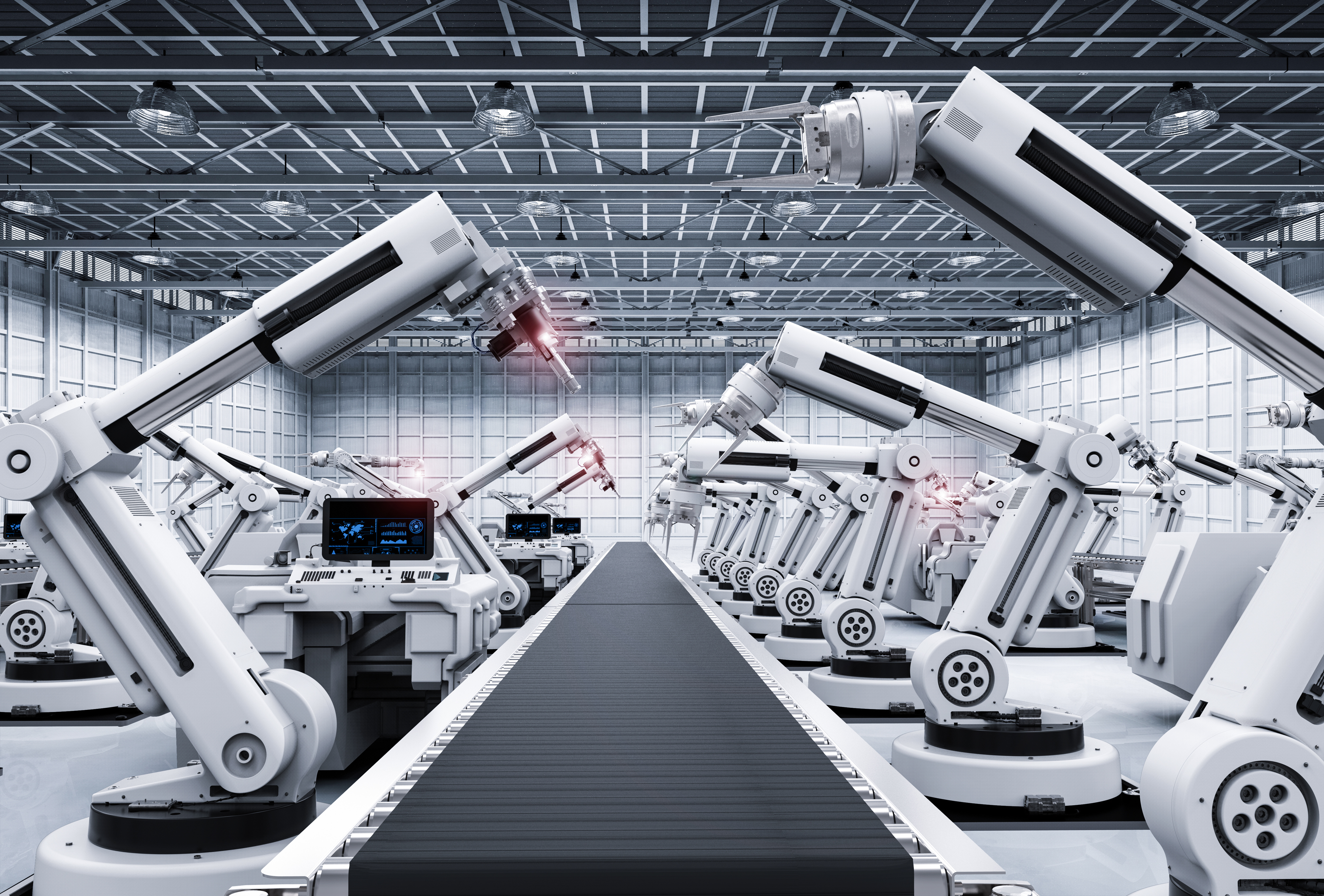

In 2018, the researchers, in partnership with BMW, began integrating robots into a mock factory assembly line. The robots were programmed to stop briefly when their paths crossed a human’s, but they ultimately behaved overly cautiously, freezing well before a person even crossed their path.

The researchers traced the problem to a limitation in the trajectory alignment algorithm the robots used to predict motion: its poor time alignment kept it from anticipating how long a person would spend at any point along their predicted path.

When the researchers tested their new algorithm at the BMW factory, the robots did not freeze but instead moved out of the way by the time a person stopped and doubled back to cross the robot’s path again.

“This algorithm builds in components that help a robot understand and monitor stops and overlaps in movement, which are a core part of human motion,” Julie Shah, associate professor of aeronautics and astronautics at MIT, said in a statement. “This technique is one of the many ways we’re working on robots better understanding people.”

Robots typically are programmed with algorithms used for music and speech processing to predict human movements. However, these types of algorithms are only designed to align two complete time series or sets of related data. These algorithms take in streaming motion data in the form of dots that represent the position of a person over time and compare the trajectory of those dots to a library of common trajectories within a given scenario.

This system, which is based on distance alone, is easily confused by common situations, such as temporary stops.
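To illustrate why aligning two complete time series is a poor fit for motion that is still in progress, here is a minimal sketch using dynamic time warping, a classic alignment technique from speech processing. The article does not name the specific algorithms the robots used, so the choice of dynamic time warping, the function name `dtw_distance`, and the one-dimensional sample data are all assumptions made for illustration.

```python
import math

def dtw_distance(a, b):
    """Dynamic time warping cost between two complete 1-D series.

    Illustrative only: the cost is based purely on distance between
    samples, and both series must be aligned from start to end.
    """
    n, m = len(a), len(b)
    cost = [[math.inf] * (m + 1) for _ in range(n + 1)]
    cost[0][0] = 0.0
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            d = abs(a[i - 1] - b[j - 1])
            # Extend the cheapest of the three allowed predecessor alignments.
            cost[i][j] = d + min(cost[i - 1][j], cost[i][j - 1], cost[i - 1][j - 1])
    return cost[n][m]

# Hypothetical positions along a walking path.
reference = [0.0, 1.0, 2.0, 3.0]
with_pause = [0.0, 1.0, 1.0, 1.0, 2.0, 3.0]   # complete walk with a stop
partial = [0.0, 1.0]                          # walk still in progress

print(dtw_distance(reference, with_pause))  # 0.0
print(dtw_distance(reference, partial))     # 3.0
```

In this sketch the complete walk aligns at zero cost, while the partial walk is penalized because its last sample is forced to line up with the end of the reference, even though the person is only partway through the motion.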

“When you look at the data, you have a whole bunch of points clustered together when a person is stopped,” graduate student Przemyslaw Lasota said in a statement. “If you’re only looking at the distance between points as your alignment metric, that can be confusing, because they’re all close together, and you don’t have a good idea of which point you have to align to.”

With existing algorithms, overlapping trajectories, where a person moves back and forth along a similar path, also may not line up correctly with a dot on a reference trajectory.

“You may have points close together in terms of distance, but in terms of time, a person’s position may actually be far from a reference point,” Lasota said.
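The ambiguity Lasota describes can be shown with a small sketch. The reference trajectory, the function name `candidate_matches`, and the tolerance are hypothetical and chosen only to make the point; this is not the researchers' code.

```python
import math

# Hypothetical reference trajectory: (x, y) positions of a person
# walking out along a line and then doubling back.
reference = [
    (0.0, 0.0), (1.0, 0.0), (2.0, 0.0), (3.0, 0.0),
    (2.0, 0.0), (1.0, 0.0), (0.0, 0.0),
]

def candidate_matches(point, reference, tol=1e-6):
    """Return every reference index that is (nearly) tied for closest to point."""
    dists = [math.dist(point, r) for r in reference]
    best = min(dists)
    return [i for i, d in enumerate(dists) if d - best <= tol]

# An observed position at x = 1 matches both the outbound and return legs.
print(candidate_matches((1.0, 0.0), reference))  # [1, 5]
```

With distance as the only metric, the two matches are indistinguishable, even though index 1 is early in the motion and index 5 is near its end.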

The researchers overcame the limitations of existing algorithms by creating a partial trajectory algorithm that aligns segments of a person’s trajectory in real time with a library of previously collected reference trajectories. In the new system, trajectories are aligned in both distance and timing, allowing the robot to anticipate stops and overlaps along a person’s walking path.

“Say you’ve executed this much of a motion,” Lasota said. “Old techniques will say, ‘this is the closest point on this representative trajectory for that motion.’ But since you only completed this much of it in a short amount of time, the timing part of the algorithm will say, ‘based on the timing, it’s unlikely that you’re already on your way back, because you just started your motion’.”
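The idea of weighing timing alongside distance can be sketched as follows. This is not the researchers' algorithm, whose details the article does not give; the cost function, the `time_weight` parameter, and the timestamped reference data are assumptions made for illustration.

```python
import math

# Hypothetical reference trajectory: (x, y, t) samples, with t in seconds,
# of a person walking out along a line and doubling back.
reference = [
    (0.0, 0.0, 0.0), (1.0, 0.0, 1.0), (2.0, 0.0, 2.0), (3.0, 0.0, 3.0),
    (2.0, 0.0, 4.0), (1.0, 0.0, 5.0), (0.0, 0.0, 6.0),
]

def align(point, elapsed, reference, time_weight=0.5):
    """Return the reference index with the lowest combined cost.

    Assumed cost: spatial distance plus a weighted gap between the time
    elapsed in the observed motion and the reference timestamp.
    """
    def cost(ref):
        x, y, t = ref
        return math.dist(point, (x, y)) + time_weight * abs(elapsed - t)
    return min(range(len(reference)), key=lambda i: cost(reference[i]))

# Same position, different elapsed times.
print(align((1.0, 0.0), 1.0, reference))  # 1 (just started, outbound leg)
print(align((1.0, 0.0), 5.2, reference))  # 5 (late in the motion, return leg)
```

Adding the timing term breaks the tie that distance alone cannot resolve: a person who has only just started moving is matched to the outbound leg rather than the return leg.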

The researchers further tested the algorithm against common partial trajectory alignment algorithms on a pair of human motion datasets. The first involves a person intermittently crossing a robot’s path in a factory setting; the second consists of previously recorded hand movements of participants reaching across a table to install a bolt, which the robot then brushes with sealant.

In both scenarios, the new algorithm helped the robot better estimate the person’s progress through a trajectory.

The researchers then integrated the alignment algorithm with motion predictors, allowing the robot to more accurately anticipate a person’s motion timing. The robot also was less prone to freezing in a factory floor scenario.

While the aim was to integrate robots into a factory setting, the researchers believe the algorithm could serve as a preprocessing step for other techniques in human-robot interaction, including action recognition and gesture detection.

“This technique could apply to any environment where humans exhibit typical patterns of behavior,” Shah said. “The key is that the [robotic] system can observe patterns that occur over and over, so that it can learn something about human behavior. This is all in the vein of work of the robot better understanding aspects of human motion, to be able to collaborate with us better.”

The researchers are scheduled to present the new technology at the Robotics: Science and Systems conference in Germany on June 24.