The game interface of the Alice Challenge. Players could manipulate three curves representing two laser beam intensities and the strength of a magnetic field gradient. The chosen curves were then realized in the laboratory in real time.

Researchers developed a versatile remote gaming interface that allowed external experts, as well as hundreds of citizen scientists all over the world, to optimize a quantum gas experiment in a lab at Aarhus University in real time through multiplayer collaboration. Surprisingly, both groups quickly used the interface to dramatically improve upon the previous best solutions, which had been established after months of careful experimental optimization. Comparing domain experts, algorithms and citizen scientists is a first step towards unravelling how humans solve complex natural science problems.

In a future characterized by algorithms with ever-increasing computational power, it becomes essential to understand the difference between human and machine intelligence. This will enable the development of hybrid-intelligence interfaces that optimally exploit the best of both worlds. By making complex research challenges available for contribution by the general public, citizen science does exactly this.

Numerous citizen science projects have shown that humans can compete with state-of-the-art algorithms in solving complex natural science problems. However, these projects have so far not addressed why a collective of citizen scientists can solve such complex problems.

An interdisciplinary team of researchers from Aarhus University, Ulm University, and the University of Sussex, Brighton has now taken important first steps in this direction by analyzing the performance and search strategy of both a state-of-the-art computer algorithm and citizen scientists in their real-time optimization of an experimental laboratory setting.

In the ‘Alice Challenge’, Robert Heck and colleagues, in their quest to realize quantum simulations, enlisted the help of both experts and citizen scientists by providing live access to their ultra-cold quantum gas experiment.

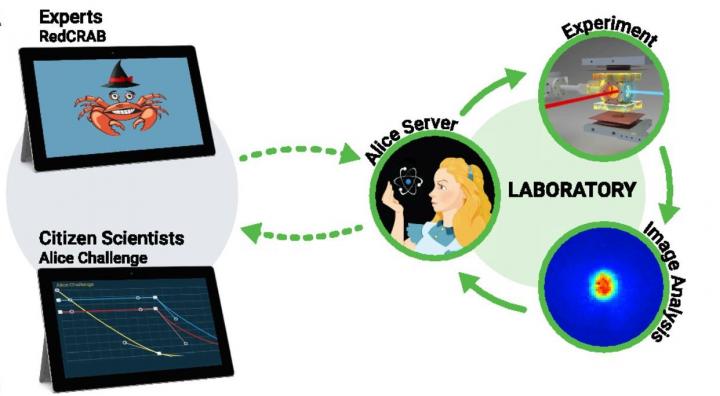

The remote scheme of the Alice Challenge. Parameters from the experts and citizen scientists are sent through an online cloud interface and turned into experimental sequences in real time. After the experiment has been conducted, the results are returned through the same cloud interface. (Credit: Robert Heck, AU)

This was made possible by a novel remote interface created by the team at ScienceAtHome, Aarhus University. The task was to manipulate laser beams and magnetic fields so as to cool as many atoms as possible down to extremely low temperatures, just above absolute zero (-273.15°C).

Surprisingly, both groups using the remote interface consistently outperformed the best solutions identified by the experimental team over months and years of careful optimization.

How did players without any formal training in experimental physics manage to find surprisingly good solutions? One hint came from an interview with a top player, a retired Italian microwave systems engineer. He said that participating in the Alice Challenge reminded him a lot of his previous job as an engineer. He never attained a detailed understanding of microwave systems but instead spent years developing an intuition of how to optimize the performance of his “black-box”.

“We humans may develop general optimization skills in our everyday work life that we can efficiently transfer to new settings. If this is true, any research challenge can in fact be turned into a citizen science game,” said Jacob Sherson, head of the ScienceAtHome project at Aarhus University.

It still seems incredible that untrained amateurs using an unintuitive game interface could outcompete expert experimentalists. One answer may lie in an old Herbert Simon quote: “Solving a problem simply means representing it so as to make the solution transparent.”

In this view, the players may be performing better not because they have superior skills, but because the interface they are using makes a different kind of exploration “the obvious thing to try out” than the traditional experimental control interface does.

“The process of developing (fun) interfaces that allow experts and citizen scientists alike to view complex research problems from different angles may contain the key to developing future hybrid intelligence systems in which we make optimal use of human creativity,” explained Jacob Sherson.

More concretely, the team set up a carefully constructed “social science in the wild” study which allowed them to quantitatively characterize the collective search behavior of the players. They concluded that what makes human problem solving unique is how a collective of individuals balances innovative attempts with the refinement of existing solutions, based on their previous performance.
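
To make the idea of balancing innovation against refinement more concrete, here is a minimal toy sketch in Python. It is not the team's analysis or the players' actual strategy. The scoring function, the probabilities, and all names (`toy_score`, `refine`, `innovate`, `collective_search`) are assumptions made purely for illustration: each round either tries an entirely new curve or makes a small change to the best curve found so far, and a fresh attempt becomes more likely when the previous round did not improve the result.

```python
import random

def toy_score(curve):
    # Hypothetical stand-in for the experiment's result (higher is better).
    return -sum((x - 0.5) ** 2 for x in curve)

def refine(curve, rng, step=0.05):
    # Small change to an existing solution, clipped to the range [0, 1].
    return [min(1.0, max(0.0, x + rng.gauss(0.0, step))) for x in curve]

def innovate(n_points, rng):
    # Entirely new curve, unrelated to earlier attempts.
    return [rng.random() for _ in range(n_points)]

def collective_search(n_points=5, n_rounds=200, seed=0):
    rng = random.Random(seed)
    best_curve = innovate(n_points, rng)
    best_score = toy_score(best_curve)
    history = [best_score]
    improved_last_round = True
    for _ in range(n_rounds):
        # Assumed rule: innovate more often after a round without improvement.
        p_innovate = 0.1 if improved_last_round else 0.4
        if rng.random() < p_innovate:
            candidate = innovate(n_points, rng)
        else:
            candidate = refine(best_curve, rng)
        score = toy_score(candidate)
        improved_last_round = score > best_score
        if improved_last_round:
            best_curve, best_score = candidate, score
        history.append(best_score)
    return best_curve, history

if __name__ == "__main__":
    curve, history = collective_search()
    print("best score at start:", history[0])
    print("best score at end:  ", history[-1])
```

In this sketch the best score can never get worse from one round to the next, because a candidate only replaces the shared best solution when it scores higher. How real players weigh the two kinds of attempts is exactly what the study set out to measure.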