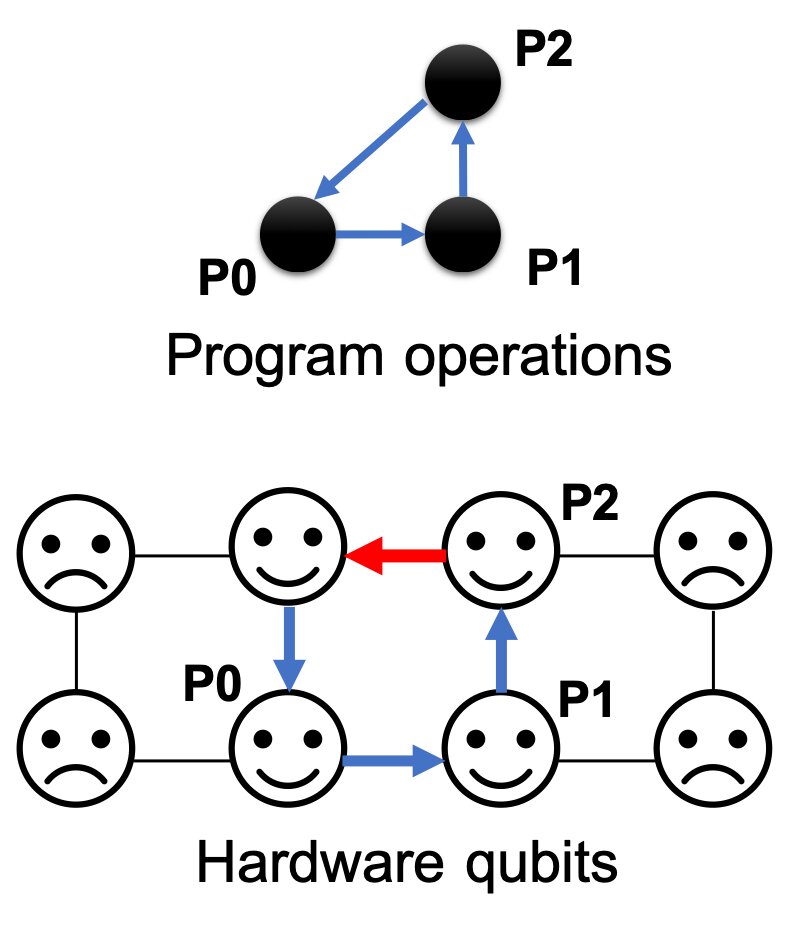

A diagram depicting the noise-adaptive compiler developed by researchers from the Enabling Practical-scale Quantum Computation collaboration and IBM. (Credit: Prakash Murali/Princeton University)

A new technique by researchers at Princeton University, the University of Chicago and IBM significantly improves the reliability of quantum computers by harnessing data about the noisiness of operations on real hardware. In a paper presented this week, researchers describe a novel compilation method that boosts the ability of resource-constrained and “noisy” quantum computers to produce useful answers. Notably, the researchers demonstrated nearly a threefold average improvement in reliability for real-system runs on IBM’s 16-qubit quantum computer, improving some program executions by as much as 18-fold.

The joint research group includes computer scientists and physicists from the EPiQC (Enabling Practical-scale Quantum Computation) collaboration, an NSF Expedition in Computing that kicked off in 2018. EPiQC aims to bridge the gap from theoretical quantum applications and programs to practical quantum computing architectures on near-term devices. EPiQC researchers partnered with quantum computing experts from IBM for this study, which will be presented at the 24th ACM International Conference on Architectural Support for Programming Languages and Operating Systems (ASPLOS) in Providence, Rhode Island on April 17.

Adapting programs to qubit noise

Quantum computers are composed of qubits (quantum bits), which are endowed with special properties from quantum mechanics. These special properties (superposition and entanglement) allow the quantum computer to represent a very large space of possibilities and comb through them for the right answer, finding solutions much faster than classical computers.

However, the quantum computers of today and the next 5-10 years are limited by noisy operations, where the quantum computing gate operations produce inaccuracies and errors. While executing a program, these errors accumulate and potentially lead to wrong answers.
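To see how quickly errors compound, consider a rough back-of-the-envelope model. It assumes each gate fails independently, which is a simplification and not a model taken from the paper, and the error rates below are made-up illustrative values.

```python
import math

def estimated_success(gate_errors):
    """Chance that no gate fails, assuming each gate errs independently."""
    return math.prod(1.0 - e for e in gate_errors)

# Hypothetical error rates for the same 10-gate program
# run on two different sets of hardware gates.
reliable = [0.02] * 10
noisy = [0.10] * 10

print(round(estimated_success(reliable), 3))  # 0.817
print(round(estimated_success(noisy), 3))     # 0.349
```

Even with only ten gates, the choice of hardware gates more than halves the estimated chance of an error-free run in this toy model.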

To offset these errors, users run quantum programs thousands of times and select the most frequent answer as the correct answer. The frequency of this answer is called the success rate of the program. In an ideal quantum computer, this success rate would be 100 percent: every run on the hardware would produce the same answer. However, in practice, success rates are much lower than 100 percent because of noisy operations.
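The success rate described above can be computed from the measured outcomes of repeated runs. The following is a minimal sketch; the function name and the sample outcomes are hypothetical and do not come from the study.

```python
from collections import Counter

def success_rate(outcomes):
    """Return the most frequent answer and the fraction of runs that produced it."""
    if not outcomes:
        raise ValueError("need at least one run")
    counts = Counter(outcomes)
    answer, hits = counts.most_common(1)[0]
    return answer, hits / len(outcomes)

# Hypothetical measurement results from 8 runs of a 2-qubit program.
runs = ["11", "11", "01", "11", "10", "11", "11", "00"]
answer, rate = success_rate(runs)
print(answer, rate)  # 11 0.625
```

In practice the list would hold thousands of outcomes rather than eight, but the calculation is the same.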

The researchers observed that on real hardware, such as the 16-qubit IBM system, the error rates of quantum operations vary widely across the different hardware resources (qubits/gates) in the system. These error rates can also vary from day to day. The researchers found that operation error rates can differ by up to nine times depending on the time and location of the operation. When a program is run on this machine, the hardware qubits chosen for the run determine the success rate.

“If we want to run a program today, and our compiler chooses a hardware gate (operation) which has poor error rate, the program’s success rate dips dramatically,” said researcher Prakash Murali, a graduate student at Princeton University. “Instead, if we compile with awareness of this noise and run our programs using the best qubits and operations in the hardware, we can significantly boost the success rate.”

To exploit this idea of adapting program execution to hardware noise, the researchers developed a “noise-adaptive” compiler that utilizes detailed noise characterization data for the target hardware. Such noise data is routinely measured for IBM quantum systems as part of daily operation calibration and includes the error rates for each type of operation the hardware supports. Leveraging this data, the compiler maps program qubits to hardware qubits that have low error rates and schedules gates quickly to reduce the chance of state decay from decoherence. It also minimizes the number of communication operations and performs them using reliable hardware operations.
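The mapping step can be illustrated with a toy example. The sketch below is not the researchers' compiler, whose algorithm this article does not detail; it simply tries every placement of program qubits on a tiny hypothetical device and keeps the one with the highest estimated reliability. The error rates, the function name `best_mapping`, and the independent-error model are all assumptions made for illustration, and gate scheduling and decoherence are not modeled.

```python
import itertools
import math

def best_mapping(program_gates, num_program_qubits, cnot_error, readout_error):
    """Exhaustively pick the placement with the highest estimated reliability.

    program_gates: list of (control, target) program-qubit pairs for two-qubit gates.
    cnot_error:    {(hw_a, hw_b): error rate} for each coupled hardware pair.
    readout_error: {hw_qubit: error rate}.
    Placements that would need extra communication operations are skipped
    to keep the sketch short.
    """
    def edge_error(a, b):
        # Treat each hardware coupling as usable in either direction.
        return cnot_error.get((a, b), cnot_error.get((b, a)))

    best, best_score = None, -1.0
    for placement in itertools.permutations(readout_error, num_program_qubits):
        errors = [readout_error[h] for h in placement]
        for c, t in program_gates:
            e = edge_error(placement[c], placement[t])
            if e is None:  # qubits not directly coupled: skip this placement
                break
            errors.append(e)
        else:
            score = math.prod(1.0 - e for e in errors)
            if score > best_score:
                best, best_score = placement, score
    return best, best_score

# Made-up calibration data for a 4-qubit line: 0 - 1 - 2 - 3.
cnot_error = {(0, 1): 0.08, (1, 2): 0.02, (2, 3): 0.05}
readout_error = {0: 0.03, 1: 0.04, 2: 0.02, 3: 0.10}

# A program with one two-qubit gate between program qubits 0 and 1.
placement, score = best_mapping([(0, 1)], 2, cnot_error, readout_error)
print(sorted(placement), round(score, 3))
```

With this made-up data, the search settles on hardware qubits 1 and 2, which share the most reliable two-qubit gate. Exhaustive search only works for very small devices; it is used here purely to make the idea concrete.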

Improving the quality of runs on a real quantum system

To demonstrate the impact of this approach, the researchers compiled and executed a set of benchmark programs on the 16-qubit IBM quantum computer, comparing the success rate of their new noise-adaptive compiler to executions from IBM’s Qiskit compiler, the default compiler for this machine. Across benchmarks, they observed nearly a threefold average improvement in success rate, with improvements of up to eighteen times on some programs. In several cases, IBM’s compiler produced wrong answers because it does not account for noise, while the noise-adaptive compiler produced correct answers with high success rates.

Although the team’s methods were demonstrated on the 16-qubit machine, all quantum systems in the next 5-10 years are expected to have noisy operations because of difficulties in performing precise gates, defects caused by lithographic manufacturing, temperature fluctuations, and other sources. Noise-adaptivity will be crucial to harness the computational power of these systems and pave the way towards large-scale quantum computation.

“When we run large-scale programs, we want the success rates to be high to be able to distinguish the right answer from noise and also to reduce the number of repeated runs required to obtain the answer,” emphasized Murali. “Our evaluation clearly demonstrates that noise-adaptivity is crucial for achieving the full potential of quantum systems.”

The team’s full paper, “Noise-Adaptive Compiler Mappings for Noisy Intermediate-Scale Quantum Computers,” is now published on arXiv and will be presented at the 24th ACM International Conference on Architectural Support for Programming Languages and Operating Systems (ASPLOS) in Providence, Rhode Island on April 17.