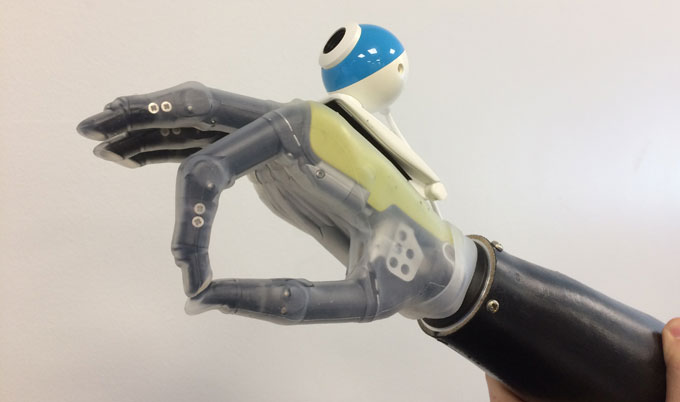

A new bionic hand is giving amputees the ability to reach objects automatically. Credit: Newcastle University

A newly created prosthetic hand gives amputees the closest thing to a real hand.

Biomedical engineers from Newcastle University in the U.K. have developed a prosthetic hand fitted with a camera that instantly takes a picture of the object in front of it and assesses its shape and size. This triggers a series of movements in the limb that lets the user reach for the object automatically, much like a real hand.

This bypasses the usual process, which requires the wearer to see the object, physically stimulate the muscles in the arm and trigger a movement in the prosthetic.

“Prosthetic limbs have changed very little in the past 100 years. The design is much better and the materials are lighter weight and more durable, but they still work in the same way,” Kianoush Nazarpour, Ph.D., a senior lecturer in Biomedical Engineering at Newcastle University, said in a statement. “Using computer vision, we have developed a bionic hand which can respond automatically. In fact, just like a real hand, the user can reach out and pick up a cup or a biscuit with nothing more than a quick glance in the right direction.”

“Responsiveness has been one of the main barriers to artificial limbs,” Nazarpour said. “For many amputees the reference point is their healthy arm or leg so prosthetics seem slow and cumbersome in comparison.

“Now, for the first time in a century, we have developed an ‘intuitive’ hand that can react without thinking,” he added.

The limb has already tested well in trials by a small number of amputees and the researchers are working on offering “hands with eyes” to patients at Newcastle’s Freeman Hospital.

While current prosthetic hands are controlled by the electrical activity of muscles recorded from the skin surface of the stump, the researchers tapped into neural networks to create the new limb, which includes four different types of grasps.

“We would show the computer a picture of, for example, a stick,” Ghazal Ghazaei, lead author of the study, said in a statement. “But not just one picture, many images of the same stick from different angles and orientations, even in different light and against different backgrounds and eventually the computer learns what grasp it needs to pick that stick up.

“So the computer isn’t just matching an image, it’s learning to recognize objects and group them according to the grasp type the hand has to perform to successfully pick it up,” she added. “It is this which enables it to accurately assess and pick up an object which it has never seen before—a huge step forward in the development of bionic limbs.”
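The idea Ghazaei describes, grouping objects by the grasp they need rather than matching them to a stored image, can be illustrated with a toy sketch. This is not the researchers' method: they trained a neural network on camera images, whereas the sketch below swaps in a much simpler nearest-centroid classifier over two hand-picked features. The feature values, the grasp labels `pinch` and `palm`, and the function names are all hypothetical and chosen only for illustration.

```python
from collections import defaultdict
from math import dist

# Hypothetical training data: (width_cm, height_cm) -> grasp label.
# In the real system the input would be camera images, not two numbers.
TRAINING = [
    ((1.0, 15.0), "pinch"),  # a stick seen from one angle
    ((1.5, 14.0), "pinch"),  # the same stick from another angle
    ((7.0, 9.0), "palm"),    # a cup
    ((8.0, 10.0), "palm"),   # a mug
]


def fit_centroids(examples):
    """Average the feature vectors of each grasp class."""
    grouped = defaultdict(list)
    for features, grasp in examples:
        grouped[grasp].append(features)
    return {
        grasp: tuple(sum(column) / len(column) for column in zip(*rows))
        for grasp, rows in grouped.items()
    }


def predict_grasp(centroids, features):
    """Return the grasp whose class centroid is closest to the features."""
    return min(centroids, key=lambda grasp: dist(centroids[grasp], features))


centroids = fit_centroids(TRAINING)

# An object that never appeared in the training data still gets a grasp,
# because the classifier compares it to grasp classes, not to single images.
unseen_pen = (0.8, 13.0)
print(predict_grasp(centroids, unseen_pen))
```

Because the prediction depends on the class averages and not on any single training example, a long, thin object that was never shown to the classifier still lands in the same group as the stick.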

According to recent statistics, there are approximately 600 new upper-limb amputees per year in the U.K. The work is part of a larger initiative to develop a bionic hand that can sense pressure and temperature and transmit that information back to the brain, and to develop electronic devices that connect to the neural networks in the forearm to allow two-way communication with the brain.

Nazarpour said the “hand that sees” is a bridge between current and future designs.

“It’s a stepping stone towards our ultimate goal,” he said. “But importantly, it’s cheap and it can be implemented soon because it doesn’t require new prosthetics; we can just adapt the ones we have.”

Doug McIntosh, a 56-year-old from Scotland who lost his right arm to cancer in 1997 and took part in the case study, said the new product gives him hope.

“For me it was literally a case of life or limb,” McIntosh said in a statement. “I had developed a rare form of cancer called epithelial sarcoma, which develops in the deep tissue under the skin, and the doctors had no choice but to amputate the limb to save my life.

“Losing an arm and battling cancer with three young children was life changing. I left my job as a life support supervisor in the diving industry and spent a year fund-raising for cancer charities,” he added. “It was this and my family that motivated me and got me through the hardest times.”