Less than a year after its AI agent Carl became what the company calls the first AI system to produce peer-reviewed research, Autoscience Institute has closed a $14 million seed round to scale its ambitions. The round, led by General Catalyst, also includes Toyota Ventures, Perplexity Fund, S32 and MaC Ventures.

The San Mateo-based startup plans to use the capital to deploy hundreds of automated AI research scientists. The pitch: give companies the output of a full ML research division without the headcount.

From Carl to Mira

When R&D World last covered Autoscience in April 2025, the company was focused on Carl, its autonomous research agent that had just achieved workshop acceptances at ICLR 2025. Since then, the company has notched additional milestones. Its system earned a silver medal in the Kaggle Santa 2025 competition against 3,300 teams, which Autoscience says is the first time a fully autonomous AI system has placed in a featured Kaggle competition with prize money.

“I feel really good about the systems that we’ve developed for automated research,” said founder and CEO Eliot Cowan. “It’s a very serious proof point that this stuff works.”

But the bigger strategic development is the launch of Mira, a new product with a commercial rather than academic focus. While Carl generates novel research hypotheses and writes papers, Mira is designed to take those ideas and implement them directly into customers’ production ML models.

“Once you have an AI system that can autonomously conduct research, it creates so much research that the burden is no longer coming up with good research ideas,” Cowan said. “It’s implementing those research ideas in your model.” Mira was built to close that gap, automating the full pipeline from hypothesis to deployed improvement.

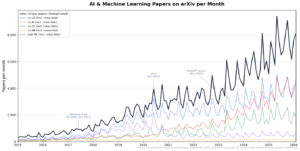

Monthly AI and machine learning paper submissions to arXiv (2015–2026). The black line shows unique papers (deduplicated by paper ID) across five AI/ML categories; colored lines show individual category counts including cross-listings: cs.LG (blue), cs.AI (red), cs.CL (green), stat.ML (purple), and cs.NE (yellow). Note the visible acceleration after ChatGPT’s November 2022 launch. Source: Cornell University arXiv Dataset (Kaggle, updated March 2026). Analysis: R&D World.

Hundreds of AI agents, working in parallel

Autoscience’s approach involves running autonomous research agents in parallel against the same problem, then selecting the best results. “We’ve found it to be incredibly useful to have 100 of these systems independently working towards the same goal, and taking the best of the results that come out,” Cowan said.

That leads to meaningfully better outcomes than just having a single attempt at the problem.
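The parallel approach Cowan describes resembles what is often called best-of-N selection: run many independent attempts, score each one, and keep the top result. The sketch below illustrates only that general idea and is not Autoscience's implementation. The `run_agent` function is a hypothetical stand-in that returns a simulated validation score, and the score distribution is an assumption chosen purely for illustration.

```python
import random
from concurrent.futures import ThreadPoolExecutor


def run_agent(seed: int) -> float:
    """Hypothetical stand-in for one independent research run.

    Returns a simulated validation score. The Gaussian parameters are
    illustrative assumptions, not measurements of any real system.
    """
    rng = random.Random(seed)
    return rng.gauss(0.70, 0.05)


def best_of_n(n: int) -> tuple[int, float]:
    """Run n independent attempts in parallel and keep the best one."""
    with ThreadPoolExecutor(max_workers=8) as pool:
        scores = list(pool.map(run_agent, range(n)))
    # Pick the attempt with the highest score.
    best_seed = max(range(n), key=scores.__getitem__)
    return best_seed, scores[best_seed]


if __name__ == "__main__":
    single = run_agent(0)
    seed, best = best_of_n(100)
    print(f"single attempt: {single:.3f}")
    print(f"best of 100:    {best:.3f} (attempt {seed})")
```

Because the single attempt is also one of the 100 candidates, the best-of-100 score can never be lower than it. How much higher it lands depends on how widely results vary from run to run.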

The sheer volume of agents also allows Autoscience to explore approaches human teams would never have the bandwidth to try. On the company’s Mira product page, the framing is direct: “What will you do with 100 ML researchers?” Cowan said the answer, in many cases, is exactly what a human team would want to do but can’t: run experiments across a range of architectures, including unconventional ones, and see what holds up.

The company claims its systems can incorporate the latest research into customer models far faster than human researchers typically manage. Consider the impact of a single breakthrough paper. Google’s 2017 “Attention Is All You Need” paper introduced the transformer architecture that now underpins virtually every major genAI model. Companies that moved quickly on that insight, OpenAI among them, gained years of competitive advantage. Autoscience is betting its agents can spot and implement the next equivalent breakthrough before a human team even finishes reading the abstract.

In any event, incorporating new ML research findings into workflows is not straightforward. “At some companies, it takes them months, or even more than a year, to incorporate the latest research into their models,” Cowan said. “That could be six- or seven-figure differences to their bottom line over the course of that year.”

Keeping up with 2,000 papers a week

Autoscience’s press release cites “more than 2,000 machine learning papers published every week” on arXiv. The cs.AI category alone accounts for roughly 3,500 to 4,000 papers per month.

In any case, no human team can keep pace with the current rate of ML research output. Autoscience says thousands of AI and ML papers are now published on arXiv each week, a figure that has been growing exponentially, with research suggesting the volume doubles roughly every 23 months.
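To put the 23-month doubling figure in more familiar terms, the short sketch below converts it into monthly and annual growth rates. It assumes the doubling time stays constant, which is a simplification; the function names are illustrative, and the 2,000-per-week starting point is the figure quoted from the press release.

```python
DOUBLING_MONTHS = 23.0  # doubling time cited in the article


def growth_factor(months: float, doubling_months: float = DOUBLING_MONTHS) -> float:
    """Multiplier on paper volume after `months`, assuming steady doubling."""
    return 2.0 ** (months / doubling_months)


def periodic_growth_rate(months: float, doubling_months: float = DOUBLING_MONTHS) -> float:
    """Fractional growth over a period of `months` (e.g. 12 for annual)."""
    return growth_factor(months, doubling_months) - 1.0


if __name__ == "__main__":
    weekly_now = 2000  # "more than 2,000" per week, per the press release
    print(f"monthly growth: {periodic_growth_rate(1):.1%}")
    print(f"annual growth:  {periodic_growth_rate(12):.1%}")
    # Two doubling periods (46 months) quadruple the weekly volume.
    print(f"weekly papers after 46 months: {weekly_now * growth_factor(46):.0f}")
```

Under that assumption, volume grows by roughly 3 percent a month, or about 44 percent a year, and a weekly count of 2,000 would reach 8,000 after two doubling periods.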

“Among AI researchers, there’s just this acceptance that you’re only going to read the papers that you hear your colleagues talking about, or that you bump into at a conference,” Cowan said. “Everyone has just sort of accepted that they’re going to miss the vast majority of the AI research that’s going on.”

Human researchers face a tradeoff: spend time reading or experimenting. “AIs don’t have to make that tradeoff,” Cowan said. Autoscience’s systems are designed to maintain full context across the entire body of published research and generate new ideas grounded in all of it, not just the fraction any individual could absorb.

The volume of output has created its own challenges, even internally. “Sometimes we produce more papers than we can even read ourselves,” Cowan acknowledged. “We might soon see research based on prior research that no human has ever read.”

Recursive self-improvement

One topic gaining attention in recent months is recursive self-improvement: AI systems that improve their own training processes. It has been a goal of the company since its inception. “Our system is designed to enable recursive self-improvement of AI models,” Cowan said. “It’s certainly on our roadmap to use our system in order to improve its own training process.”

In January, Anthropic CEO Dario Amodei told an audience at the World Economic Forum in Davos that “the biggest thing to watch is AI systems building AI systems.” Google DeepMind CEO Demis Hassabis endorsed the concept as well. In February, OpenAI released GPT-5.3-Codex, which it described as its first model that was “instrumental in creating itself,” with its team having used early versions to debug training runs and manage deployment.

What’s next

The funding will support Autoscience’s expansion to Fortune 500 and large private enterprise customers. The company is also hiring, with open roles for ML scientists and software engineers.

For now, Cowan is focused on a narrower ambition that could have broad consequences: building AI that is purpose-built for building AI. “The AI models that are out there today are very good generalists,” he said. “My goal is to create AI models that are specialized in creating other AI models. The tool that we’ve built is both very useful to other people who are training AI models and to us in training our own.”