On a recent trip to the R&D 100 Awards Gala in Arizona, I took my first Waymo. The car showed up by itself within minutes at the airport, whisked me to my hotel and played Spotify along the way. While a sign on the steering wheel had a note about how drivers must keep their hands on the wheel, there was no longer a driver. It was just a machine perceiving, deciding and acting in the physical world. In other words, the car was a robot, in the truest sense.

That experience offered a concrete definition of “agentic AI” at a moment when the term risks becoming meaningless. While I’ve used agentic coding tools, something about them still feels abstract: suggestions on a screen, code I review before it runs. Waymo dropped me at the front entrance of a hotel. That felt different.

With driverless vehicles gradually entering the mainstream, at least as taxis, the industry seems eager to apply “agentic” to more modest capabilities. At CES 2025, for instance, TomTom and Amazon announced an integration they describe as delivering an “agentic navigation experience.”

Interest in the term “agentic” on Google Search Trends

At CES, “agentic” often seems to mean something closer to a fluent concierge than an autonomous actor: a voice layer that can juggle intents, pull data from multiple apps, and stitch together an answer that feels coherent. TomTom’s demos with Amazon and, more recently, SoundHound AI point in that direction, combining navigation with a conversational interface that can plan multi-stop routes, surface charging options and coordinate with in-car domains like music or calendars.

The term “agentic” seemed to be everywhere at CES, applied with varying degrees of precision. Nvidia CEO Jensen Huang invoked it for Cursor, the AI coding tool, and for robotaxis, systems that reason through problems and, in some cases, physically act on the world. Samsung used it to describe a TV that recognizes who’s in the room. Lenovo announced “Qira,” a “personal AI super agent” that syncs context across devices. The common thread is more task orchestration than robotics: AI that can string together multiple actions without step-by-step instruction.

The expectation that embodied agents will soon act in the physical world, with safety constraints and real consequences, is running into headwinds. Most agents today seem to execute low-stakes actions with confirmation. At CES 2025, Huang declared, “The ChatGPT moment for general robotics is just around the corner.” This year, the language softened: the ChatGPT moment for physical AI is “nearly here.” “The challenge is clear,” he said. “The physical world is diverse and unpredictable.”

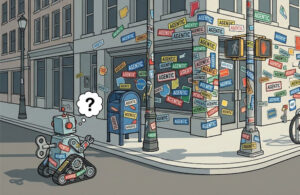

If 2025 was supposed to be the year of the agent and, by most accounts, wasn’t quite, 2026 may be the year we discover what the term actually means under pressure. The headlines from CES week tell a messier story than the keynotes. LinkedIn banned an AI agent startup, then reversed course. China opened a probe into Meta’s acquisition of agent-maker Manus. IBM’s coding agent “Bob” was easily tricked into running malware. Forbes ran a piece titled “AI Agents Fail Without Human Oversight.”

Meanwhile, a Sakana AI agent won a competitive programming contest, reportedly outperforming 804 humans. That’s closer to the Waymo end of the spectrum: a system that actually did something, autonomously, in the real world.

The word “agentic” will appear in countless press releases this year. The question is whether it will come to mean anything precise, or dissolve into the same semantic fog as “AI,” “smart” and “intelligent” before it.