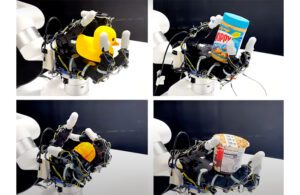

The four-fingered robotic hand has 16 touch sensors. [Image via UCSD]

Engineers at the University of California San Diego developed a new approach allowing a robotic hand to rotate objects solely through touch.

Because the method does not rely on vision, the hand works from touch information alone. Using the technique, the engineers built a hand that can smoothly rotate a range of objects, including small toys and cans, and can even rotate fruits and vegetables without squishing them.

According to UCSD, the team believes the work could help develop robots that can manipulate objects in the dark.

To build the system, the team attached 16 touch sensors (which cost about $12 apiece) to the palm and fingers of a four-fingered robotic hand. Each sensor detects only whether an object is touching that part of the hand. These low-cost, low-resolution sensors provide simple binary signals across a large area of the robotic hand. UCSD says this contrasts with other approaches that rely on high-cost, high-resolution touch sensors at the fingertips.
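The article describes each sensor as reporting a simple binary signal but does not say how that signal is produced. The Python sketch below shows one plausible way, assuming each sensor gives a raw analog reading that is compared against a fixed threshold. The names `binarize` and `CONTACT_THRESHOLD`, the threshold value and the sample readings are all hypothetical, not part of UCSD's system.

```python
from typing import List

NUM_SENSORS = 16         # 16 sensors on the palm and fingers, per the article
CONTACT_THRESHOLD = 0.2  # hypothetical: raw readings above this count as contact


def binarize(raw_readings: List[float], threshold: float = CONTACT_THRESHOLD) -> List[int]:
    """Map each raw sensor reading to 1 (touching) or 0 (not touching)."""
    if len(raw_readings) != NUM_SENSORS:
        raise ValueError(f"expected {NUM_SENSORS} readings, got {len(raw_readings)}")
    return [1 if r > threshold else 0 for r in raw_readings]


if __name__ == "__main__":
    # Made-up raw readings: a handful of sensors are pressed by the object.
    readings = [0.0, 0.05, 0.9, 0.7, 0.0, 0.0, 0.3, 0.1,
                0.0, 0.0, 0.0, 0.6, 0.0, 0.0, 0.0, 0.25]
    contacts = binarize(readings)
    print(contacts)  # [0, 0, 1, 1, 0, 0, 1, 0, 0, 0, 0, 1, 0, 0, 0, 1]
    print("sensors in contact:", sum(contacts))  # 5
```

Discarding the magnitude of each reading is what keeps the signal simple: a 0/1 contact flag carries no texture detail, so it is also easy to reproduce in a simulator.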

Xiaolong Wang, a professor of electrical and computer engineering at UC San Diego, led the study. Wang says those higher-cost approaches come with several problems. First, they use only a small number of sensors, which lowers the chance that the sensors contact the object and limits the system's sensing ability. High-resolution touch sensors also provide texture information that is difficult and expensive to simulate, Wang added.

Finally, unlike the UCSD method, those approaches still rely on vision.

“Here, we use a very simple solution,” said Wang. “We show that we don’t need details about an object’s texture to do this task. We just need simple binary signals of whether the sensors have touched the object or not, and these are much easier to simulate and transfer to the real world.”

What sets this robotic hand apart

The engineers say that binary touch sensors covering a large area of the hand give it enough information about an object's 3D structure and orientation to rotate the object successfully without vision.

UCSD said its team trained the system by running simulations of a virtual robotic hand rotating objects, including objects with irregular shapes. During rotation, the system takes into account which sensors are touching the object, along with the current positions of the hand's joints and the joints' previous actions. From this information, it tells the robotic hand where each joint needs to move.
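The inputs and outputs described above can be sketched as a single control step. This is a minimal illustration, not UCSD's trained controller: it assumes 16 joints (the article does not give a count), treats each action as a small change in joint position, and uses a random linear map named `LinearPolicy` as a hypothetical stand-in for the policy that would actually be learned in simulation. The helper names `build_observation` and `control_step` are likewise illustrative.

```python
import random
from typing import List, Tuple

NUM_SENSORS = 16  # binary touch sensors, per the article
NUM_JOINTS = 16   # assumption: the article does not state the joint count
OBS_DIM = NUM_SENSORS + 2 * NUM_JOINTS  # contacts + joint positions + previous action


def build_observation(contacts: List[int], joint_pos: List[float],
                      prev_action: List[float]) -> List[float]:
    """Concatenate touch contacts, joint positions and the last action."""
    if len(contacts) != NUM_SENSORS:
        raise ValueError("wrong number of contact signals")
    if len(joint_pos) != NUM_JOINTS or len(prev_action) != NUM_JOINTS:
        raise ValueError("wrong number of joint values")
    return [float(c) for c in contacts] + list(joint_pos) + list(prev_action)


class LinearPolicy:
    """Hypothetical stand-in for a learned policy: an untrained random linear map."""

    def __init__(self, obs_dim: int = OBS_DIM, act_dim: int = NUM_JOINTS, seed: int = 0):
        rng = random.Random(seed)
        self.weights = [[rng.uniform(-0.01, 0.01) for _ in range(obs_dim)]
                        for _ in range(act_dim)]

    def act(self, obs: List[float]) -> List[float]:
        # One output per joint, interpreted here as a small change in position.
        return [sum(w * o for w, o in zip(row, obs)) for row in self.weights]


def control_step(policy: LinearPolicy, contacts: List[int], joint_pos: List[float],
                 prev_action: List[float]) -> Tuple[List[float], List[float]]:
    """Return (next joint targets, action) for one control step."""
    obs = build_observation(contacts, joint_pos, prev_action)
    action = policy.act(obs)
    targets = [q + a for q, a in zip(joint_pos, action)]
    return targets, action


if __name__ == "__main__":
    policy = LinearPolicy()
    joints = [0.0] * NUM_JOINTS
    action = [0.0] * NUM_JOINTS
    for t in range(3):
        # Made-up contacts: sensors toggle on and off as the object turns.
        contacts = [1 if (i + t) % 4 == 0 else 0 for i in range(NUM_SENSORS)]
        # Idealized: assume the hand reaches each target exactly.
        joints, action = control_step(policy, contacts, joints, action)
        print(t, [round(q, 4) for q in joints[:4]])
```

In a real system the weights would come from training in simulation; only the shape of the data flow (binary contacts, joint positions and previous actions in, per-joint targets out) reflects the description above.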

The researchers tested the robotic hand’s ability to manipulate objects with different shapes such as rubber ducks, peanut butter jars, toy fruit and instant oatmeal cups. [Image via UCSD]

The team then tested the system on a real robotic hand with objects the system had not yet encountered. The hand successfully rotated many of them, including a tomato, a pepper, a can of peanut butter and a toy rubber duck, without stalling or losing its hold. Objects with more complex shapes, like the rubber duck, took longer to rotate.

Wang and the engineers next aim to extend the approach to more complex manipulation tasks, such as enabling the robotic hand to catch, throw and juggle.

“In-hand manipulation is a very common skill that we humans have, but it is very complex for robots to master,” said Wang. “If we can give robots this skill, that will open the door to the kinds of tasks they can perform.”