In early 2026, genAI still feels like alien technology: intuitive on the surface, bewildering when you dig in.

Andrej Karpathy helped build OpenAI. He led Tesla’s Autopilot Vision team. He coined the term “vibe coding.” And in late December, he posted to X that he’s never felt more behind as a programmer.

“I have a sense that I could be 10X more powerful if I just properly string together what has become available over the last ~year,” Karpathy wrote, in a post viewed 14 million times. He described current AI tools as “some powerful alien tool… handed around except it comes with no manual.”

That’s a big claim. If any professional could merely double their productivity with alien intelligence, the results would be clear. Startups in the life sciences and materials space are making similar promises: faster drug discovery cycles, accelerated materials screening, years compressed into months.

When Mike Connell, COO of Enthought, talks to R&D leaders about what they’re doing with AI, the answer is almost always the same.

“They almost always go to LLMs and generative AI. They don’t have a broader sense of it than that,” he says. “They naturally go to things like patent search or literature search, which are good applications. People are doing interesting things with it. But then they say, ‘I don’t see how to put my data in there and get anything useful out of it.’”

The struggle is fairly universal across sectors. A January 2026 TechRepublic analysis found nearly two-thirds of organizations remain stuck in “pilot purgatory,” unable to move AI projects into production. A global survey from Melbourne Business School confirmed that trust remains a critical barrier to enterprise adoption.

Are the tools ready, and we are not? Or are we using the wrong tools? Or are we using the tools incorrectly? Yes.

Anthropic’s latest Economic Index explains part of the trust gap: prompt sophistication correlates almost perfectly (r > 0.92) with output quality. Surface prompts get surface answers. But even experts who prompt well don’t fully trust the output. Many have seen it hallucinate with confidence.

Everyone’s experimenting with AI. Almost nobody’s scaling.

Mike Connell

While Karpathy mulls the prospect of increasing productivity 10x, another OpenAI cofounder sees something different. In a November 2025 interview, Ilya Sutskever, OpenAI’s former chief scientist, expressed genuine bewilderment at the gap between AI’s benchmark performance and its real-world impact. “This is one of the very confusing things about the models right now,” Sutskever said. “How to reconcile the fact that they are doing so well on evals?” The real-world economic impact, he noted, is dramatically behind. He added that the models “somehow just generalize dramatically worse than people. It’s super obvious.”

Hallucinations and performance degradation are still problems. For instance, Sutskever described how current models can get into dysfunctional debugging loops. He said: “You go to some place and then you get a bug. Then you tell the model, ‘Can you please fix the bug?’ And the model says, ‘Oh my God, you’re so right. I have a bug. Let me go fix that.’” And in fixing the first bug, it introduces another. “Then you tell it, ‘You have this new second bug,’ and it tells you, ‘Oh my God, how could I have done it? You’re so right again,’ and brings back the first bug, and you can alternate between those. How is that possible?” Sutskever asked. “I’m not sure, but it does suggest that something strange is going on.”

Trust in LLMs is waning

Despite their quirks, LLMs have arrived. More people are using them every month, especially in software development. About 84% of developers now use AI, according to Stack Overflow’s 2025 survey.

But that doesn’t mean that professionals, developers or otherwise, trust the technology. A 2025 KPMG/University of Melbourne study (48,000 people, 47 countries) found that although 66% of people already use AI intentionally and with some regularity, fewer than half of global respondents (46%) are willing to trust it. Compared with the previous study, which covered 17 countries and was conducted before the release of ChatGPT in 2022, the results show that people have become less trusting and more worried about AI as adoption has increased.

The situation extends to science as well. “We had a case recently where a company engaged with us because one of their computational scientists was developing models for the bench scientists, and the bench scientists refused to use them,” says Connell. “They just don’t trust them.”

Superpowers without a manual

Another aspect of that trust lies in education. Using LLMs professionally isn’t necessarily straightforward given their probabilistic nature. There is a degree of unpredictability built into models whose job is to predict the next token. Part of the art of using them is thus knowing when not to: that is, when to hand a task to a skilled human or a deterministic tool that can compensate for LLMs’ shortcomings. Connell illustrates the idea with a pop culture reference: The Greatest American Hero, a 1980s TV show about a high school teacher who receives a superhero suit from aliens but loses its instruction manual.

“The whole premise of the show is he’s got superpowers but doesn’t really know how to use them,” Connell says. “Sometimes he can turn invisible, but it’s an accident; he’s not sure how he did it. That’s what these AI things are like. It’s this incredibly powerful thing that essentially dropped from space, and we’re not so much engineering with it as trying to figure out what it does and how to use it.”

The expert knowledge gap

Part of the problem is that LLMs are trained on the internet, which means they know what’s in textbooks, not what’s in your lab.

“One of the things that’s true in the industries we work in is that scientists know more than the textbooks do,” Connell says. “That’s actually been a problem. The off-the-shelf simulator doesn’t know my physics, and I can’t add it.”

This tracks with emerging research. LLMs perform well on standardized medical exams. Med-PaLM 2 was the first to reach “expert-level” on USMLE-style questions, but structured tests don’t capture real-world complexity. Generalist models hit a ceiling when facing specialist knowledge that goes beyond their training data.

And in healthcare specifically, the pushback is growing. ECRI, an independent patient safety organization, ranked “misuse of AI chatbots in healthcare” as the #1 health technology hazard for 2026, citing cases where chatbots suggested incorrect diagnoses, recommended unnecessary testing and even invented body parts.

So if the current approach isn’t working, what does?

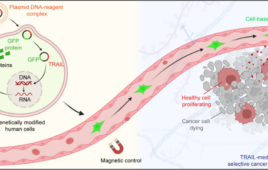

The old adage about finding the right tool for the job applies here. Instead of asking LLMs to do everything, Connell’s team builds hybrid systems that combine physics-based models with neural networks, encoding what scientists already know, then using AI to fill in the gaps.

“You stack these operators, which pull as much information out of the design space as possible, and then you use a neural network to soak up the rest,” he explains. “It reduces the requirements for data, increases the accuracy and speed, and makes the predictive model much more valid.”

The approach earns trust because scientists can see their expertise reflected in the system. The key insight is knowing what to ask AI to do, and what to enforce through other means.
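For readers who think in code, the hybrid idea can be sketched in a few lines. This is a toy illustration, not Enthought's implementation: the "physics" is a made-up linear law, the measurements are synthetic, and a one-parameter least-squares fit stands in for the neural network Connell mentions. All names here (`physics_model`, `fit_residual`, `make_hybrid`) are hypothetical.

```python
from typing import Callable, Sequence


def physics_model(x: float) -> float:
    """Trusted prior knowledge (hypothetical): a linear law with a known coefficient."""
    return 2.0 * x


def fit_residual(xs: Sequence[float], ys: Sequence[float],
                 physics: Callable[[float], float]) -> float:
    """Fit a correction c * x**2 to whatever the physics leaves unexplained.

    Closed-form least squares for a single parameter; a simple stand-in
    for the neural network in the article.
    """
    residuals = [y - physics(x) for x, y in zip(xs, ys)]
    numerator = sum((x ** 2) * r for x, r in zip(xs, residuals))
    denominator = sum(x ** 4 for x in xs)
    return numerator / denominator


def make_hybrid(physics: Callable[[float], float], c: float) -> Callable[[float], float]:
    """Combine the physics prior with the learned correction."""
    def predict(x: float) -> float:
        return physics(x) + c * x ** 2
    return predict


# Synthetic "measurements": the known law plus an effect the physics model lacks.
xs = [0.5, 1.0, 1.5, 2.0, 2.5]
ys = [2.0 * x + 0.5 * x ** 2 for x in xs]

c = fit_residual(xs, ys, physics_model)
hybrid = make_hybrid(physics_model, c)
print(f"learned correction: {c:.3f}, prediction at x=3: {hybrid(3.0):.2f}")
```

The learned part only has to explain the gap between theory and data, which is one plausible reading of why such systems need less data than a model trained from scratch.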

Sometimes the most cogent advice for working with LLMs comes from LLMs themselves. In a recent coding session, Claude Opus 4.5 offered this guidance after watching a model repeatedly hallucinate identifiers: “Don’t ask the LLM to maintain state. Enforce it. The LLM generates creative content; code enforces constraints.”

That’s the pattern Connell is describing for the lab. Let AI do what it’s good at: pattern recognition, handling messy variance, generating hypotheses. But encode the physics you do know as constraints, not suggestions. Keep humans as the arbiters of truth.
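In code, "enforce it" can be as plain as checking generated text against a registry before anyone acts on it. The sketch below is a minimal example under stated assumptions: the sample-ID format, the registry contents and the function names are all invented for illustration, and the draft string stands in for model output.

```python
import re
from typing import List, Set

# Hypothetical registry of identifiers that actually exist in the lab system.
KNOWN_SAMPLE_IDS: Set[str] = {"S-001", "S-002", "S-003"}

# Assumed ID format: "S-" followed by exactly three digits.
ID_PATTERN = re.compile(r"\bS-\d{3}\b")


def find_unknown_ids(text: str, known: Set[str]) -> List[str]:
    """Return identifiers mentioned in the text that are not in the registry."""
    mentioned = set(ID_PATTERN.findall(text))
    return sorted(mentioned - known)


def enforce_known_ids(text: str, known: Set[str]) -> str:
    """Pass the text through only if every identifier is real; otherwise fail loudly."""
    unknown = find_unknown_ids(text, known)
    if unknown:
        raise ValueError(f"unknown identifiers in generated text: {unknown}")
    return text


# Stand-in for model output that includes one invented identifier.
draft = "Compare S-001 with S-007, then rerun S-003."
print(find_unknown_ids(draft, KNOWN_SAMPLE_IDS))
```

The model stays free to write the plan; the check decides whether the plan refers to things that exist.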

“There’s enough information in your R&D system, as it’s traditionally constructed, to perform this computation,” Connell says. “A lot of it is in people’s heads, so the trick is getting it out and codifying it, then building it into the system.”

It’s not as flashy as a chatbot. But it actually works.

Coming next: How to build AI systems that scientists will actually trust.