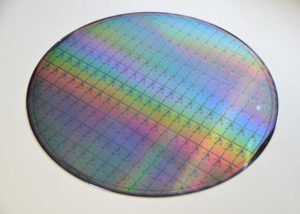

A wafer filled with memristors. Courtesy of UCL

Extremely energy-efficient artificial intelligence is now closer to reality after a study by University College London researchers found a way to improve the accuracy of a brain-inspired computing system.

The system, which uses memristors to create artificial neural networks, is at least 1,000 times more energy efficient than conventional transistor-based AI hardware, but has until now been more prone to error.

Existing AI is extremely energy-intensive: training one AI model can generate 284 tons of carbon dioxide, equivalent to the lifetime emissions of five cars. Replacing the transistors that make up all digital devices with memristors, novel electronic devices first built in 2008, could reduce this to a fraction of a ton of carbon dioxide, equivalent to the emissions generated in an afternoon’s drive.

Since memristors are so much more energy-efficient than existing computing systems, they can potentially pack huge amounts of computing power into hand-held devices, removing the need to be connected to the internet.

This is especially important because over-reliance on the internet is expected to become problematic in future, owing to ever-increasing data demands and the difficulty of increasing data transmission capacity past a certain point.

In the new study, published in Nature Communications, engineers at UCL found that accuracy could be greatly improved by getting memristors to work together in several sub-groups of neural networks and averaging their calculations, meaning that flaws in each of the networks could be cancelled out.
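The averaging idea can be illustrated with a toy simulation. The sketch below is not the UCL team's code or model: it assumes a simple linear classifier, treats device flaws as Gaussian noise added to each weight, and uses hypothetical names (`run_demo`, `noisy_copy`) and made-up parameter values. It only shows why averaging the outputs of several imperfect networks tends to cancel their individual errors.

```python
import random


def dot(u, v):
    """Dot product of two equal-length lists."""
    return sum(a * b for a, b in zip(u, v))


def noisy_copy(weights, sigma, rng):
    """Assumed flaw model: each weight is perturbed by Gaussian noise."""
    return [w + rng.gauss(0.0, sigma) for w in weights]


def accuracy(predict, inputs, labels):
    """Fraction of inputs for which predict(x) matches the label."""
    correct = sum(1 for x, y in zip(inputs, labels) if predict(x) == y)
    return correct / len(inputs)


def run_demo(dim=20, members=25, sigma=1.0, samples=2000, seed=0):
    rng = random.Random(seed)

    # Ideal (flawless) classifier and test data labelled by it.
    ideal = [rng.gauss(0.0, 1.0) for _ in range(dim)]
    inputs = [[rng.gauss(0.0, 1.0) for _ in range(dim)] for _ in range(samples)]
    labels = [dot(ideal, x) >= 0 for x in inputs]

    # Several imperfect networks, each with independent weight errors.
    committee = [noisy_copy(ideal, sigma, rng) for _ in range(members)]

    # Mean accuracy of the networks when each is used on its own.
    single_acc = sum(
        accuracy(lambda x, w=w: dot(w, x) >= 0, inputs, labels)
        for w in committee
    ) / members

    # Accuracy when the networks' outputs are averaged before deciding.
    def committee_predict(x):
        mean_output = sum(dot(w, x) for w in committee) / members
        return mean_output >= 0

    committee_acc = accuracy(committee_predict, inputs, labels)
    return single_acc, committee_acc


if __name__ == "__main__":
    single_acc, committee_acc = run_demo()
    print(f"mean accuracy of one noisy network: {single_acc:.3f}")
    print(f"accuracy of averaged committee:     {committee_acc:.3f}")
```

Because the simulated weight errors are independent, they partly cancel when the outputs are averaged, so the committee's decisions sit closer to those of the ideal classifier than any single noisy network's do. Real memristor non-idealities are more varied than this noise model assumes.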

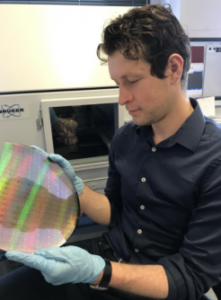

Dr Adnan Mehonic holds a wafer filled with memristors. Courtesy of UCL

Memristors are described as “resistors with memory” because they remember the amount of electric charge that flowed through them even after being turned off. They were considered revolutionary when they were first built over a decade ago: a “missing link” in electronics to supplement the resistor, capacitor and inductor. They have since been manufactured commercially in memory devices, and the research team says they could be used to develop AI systems within the next three years.

Memristors offer vastly improved efficiency because they are not limited to a binary code of ones and zeros: they can also operate at multiple levels between zero and one, meaning more information can be packed into each memory element.

Moreover, memristor-based computing is often described as neuromorphic (brain-inspired) because, as in the brain, processing and memory are implemented in the same adaptive building blocks. Current computer systems, by contrast, waste a lot of energy moving data between separate processing and memory units.

In the study, Dr Adnan Mehonic, PhD student Dovydas Joksas (both UCL Electronic & Electrical Engineering), and colleagues from the UK and the US tested the new approach in several different types of memristors and found that it improved the accuracy of all of them, regardless of material or particular memristor technology. It also worked for a number of different problems that may affect memristors’ accuracy.

The researchers found that their approach increased the accuracy of the neural networks for typical AI tasks to a level comparable to that of software tools run on conventional digital hardware.

“We hoped that there might be more generic approaches that improve not the device-level, but the system-level behavior, and we believe we found one. Our approach shows that, when it comes to memristors, several heads are better than one. Arranging the neural network into several smaller networks rather than one big network led to greater accuracy overall,” said Dr Mehonic, director of the study.

“We borrowed a popular technique from computer science and applied it in the context of memristors. And it worked! Using preliminary simulations, we found that even simple averaging could significantly increase the accuracy of memristive neural networks,” said Dovydas Joksas.

“We believe now is the time for memristors, on which we have been working for several years, to take a leading role in a more energy-sustainable era of IoT devices and edge computing,” said Professor Tony Kenyon (UCL Electronic & Electrical Engineering), a co-author on the study.
