Scientists have long been perceived, and portrayed in film, as old people in white lab coats perched at a bench full of bubbling fluorescent liquids. The present-day reality is quite different. Scientists are increasingly data jockeys in hoodies, sitting before monitors and analyzing enormous amounts of data. Modern-day labs are more likely to consist of sterile rows of robots doing the handling of materials that was once manual, and lab notebooks are now electronic, stored in massive data centers holding vast quantities of information. Today, scientific input comes from data pulled from the cloud, with algorithms fueling scientific discovery the way Bunsen burners once did.

Advances in technology, and especially in instrumentation, enable scientists to collect and process data at an unprecedented scale. As a result, scientists are now faced with massive datasets that require sophisticated analysis techniques and computational tools to extract meaningful insights. This scale also presents significant challenges: how do you store, manage, and share these large datasets, and how do you ensure that the data is reliable and of high quality?

The impact of big data on science

This growth in data is transforming the way scientists conduct research, and it is enabling new discoveries across many fields, especially in genome and protein research. It has fostered the emergence of new kinds of scientists, bioinformaticians and data scientists, who work hands-on with big data by developing and applying algorithms. In fact, “data scientist” has been at the top of the list of desirable jobs on career sites for the last few years. However, while the demand for professionals adept at working with big data is at an all-time high, there is a significant shortage of skilled talent.

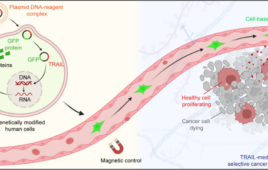

In medicine, as in other fields, it’s not just the volume and velocity of data generation that are increasing, but also the variety of data being collected to answer research questions. For instance, flow cytometry data is fundamentally different from DNA sequence data, which in turn is entirely different from 3D models of proteins. The tools and algorithms that work for one data type are not suited to another. Flexibility in data storage and modeling is crucial for repurposing data. This is especially true for predictive science, where integration needs to occur between data and data types unrelated to the hypotheses of any of the original studies.

All of this is now required during the development of drugs worth hundreds of millions of dollars (or more), for tasks such as predicting new biomarkers for rare adverse events in small subsets of patients.

Turning to machine learning and artificial intelligence

Technology can act like a powerful flashlight, illuminating hidden patterns and insights that exist in vast amounts of data and allowing us to see and understand things that were previously hidden in the dark. That’s why, even as generative AI tools like ChatGPT generate a lot of headlines and stoke fear about potential risks, drug discovery is one setting where artificial intelligence (AI) and machine learning (ML) are poised to make a significant, positive impact.

For example, during the pandemic, I had the opportunity to collaborate with the team behind the EVE Online video game to create Project Discovery – Flow Cytometry, a free mini-game that enabled tens of thousands of gamers to become citizen scientists. Using data from cell samples of patients with COVID-19 and other immune system diseases, players were trained to identify different cell patterns in data generated by a technology known as flow cytometry. The game offered rewards and rankings to make it fun and challenging, but many players also expressed a desire to take part in scientific research, and satisfaction in doing so, especially where it related to their own experience.

To date, players have solved millions of puzzles, representing hundreds of years of effort. All data from the project will be freely available for open science. Companies like Dotmatics will be able to use the data to develop ML approaches to flow cytometry data analysis, which could lead to faster, less expensive, and more significant medical breakthroughs.

Today, both ML and AI are being used around the world in many research labs and universities to expedite discoveries. The National Cancer Institute’s (NCI) Center for Cancer Research has developed deep-learning algorithms to improve cancer detection in people who have symptoms. For example, one model can function as “a virtual expert,” reviewing MRIs in hard-to-detect cancer types, guiding less-experienced radiologists, and minimizing error rates. Similarly, AI is used at the University of Toronto to predict Alzheimer’s risk, at Rutgers University to predict cardiovascular disease, and by hundreds of startups to design cheaper, safer drugs with fewer side effects.

Complexities of big data, and making it FAIR

Despite these advances, the complexity of the data and the heterogeneity of the tools required to analyze it can make it difficult for researchers to collaborate effectively to generate the big datasets that AI requires. Efforts such as the FAIR Guiding Principles for scientific data management and stewardship provide guidelines to improve the Findability, Accessibility, Interoperability, and Reuse of digital assets. They are increasingly being adopted and are even being mandated by granting agencies. The threat of withheld funding will act as a powerful motivating force in academia, but this doesn’t directly translate to pharmaceutical companies, which are perhaps even more burdened by the same underlying challenges when trying to find and share massive and complex datasets internally within global organizations.
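To make the four FAIR goals a little more concrete, here is a minimal Python sketch of how a team might flag gaps in a dataset's metadata. It is an illustration only: the `DatasetRecord` class, its field names, the `fair_gaps` helper, and the mapping from fields to principles are all assumptions made for this example, and the published FAIR principles are considerably more detailed than this checklist.

```python
from dataclasses import dataclass


@dataclass
class DatasetRecord:
    """Hypothetical metadata record for one dataset (field names are illustrative)."""
    identifier: str = ""    # persistent ID such as a DOI (Findable)
    description: str = ""   # human-readable summary (Findable)
    access_url: str = ""    # where the data can be retrieved (Accessible)
    data_format: str = ""   # open or standard file format (Interoperable)
    license: str = ""       # terms of reuse (Reusable)
    provenance: str = ""    # how and where the data was produced (Reusable)


# Assumed mapping: which fields this sketch treats as evidence for each principle.
FAIR_FIELDS = {
    "Findable": ["identifier", "description"],
    "Accessible": ["access_url"],
    "Interoperable": ["data_format"],
    "Reusable": ["license", "provenance"],
}


def fair_gaps(record: DatasetRecord) -> dict[str, list[str]]:
    """Return, per principle, the fields that are empty or whitespace-only."""
    gaps = {}
    for principle, names in FAIR_FIELDS.items():
        missing = [name for name in names if not getattr(record, name).strip()]
        if missing:
            gaps[principle] = missing
    return gaps


if __name__ == "__main__":
    record = DatasetRecord(
        identifier="doi:10.0000/example",  # placeholder, not a real DOI
        description="Flow cytometry panel, example cohort",
        data_format="FCS 3.1",
    )
    # Reports the principles that still have unfilled fields.
    print(fair_gaps(record))
```

A check like this only confirms that metadata fields are filled in. It says nothing about whether the identifier resolves, the format is truly interoperable, or the license permits the intended reuse, which is where most of the real effort lies.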

While the old way of doing science with beakers and chemistry is still important, tomorrow’s scientists will be able to explore and understand the world around us and scale ambitious research into areas that are presently economically prohibitive. However, to truly harness the power of AI, we must invest in further improvements to the infrastructure supporting the integration, analysis, and reuse of data that have already become the new frontier of scientific discovery.