A research team led by the University of Massachusetts Amherst is taking on the massive volumes of digital information that drain energy and slow data transmission, using a technology built on old-school analog computing: an electrical component called a memristor. The name is a combination of “memory” and “resistor.” A memristor is a two-terminal passive device whose resistance depends on the history of the voltage and current applied to it. It retains that resistance state without power, enabling non-volatile analog computing in which memory and processing occur in the same physical location.

The team has developed a brain-inspired sensing system that pairs a touch sensor with a smart memory chip that reacts only when necessary, improving energy efficiency and computing speed. They published their work in Nature Sensors.

The team’s memristor-based touch sensor processes only the data around pixels that contain a signal, ignoring irrelevant background noise. “When you write, it’s only a very small portion [of pixels] that are involved,” said Qiangfei Xia, the Dev and Linda Gupta professor in the Riccio College of Engineering at UMass Amherst. “You do not have to process all the information you got from the entire screen, only those pixels you’re writing on.”
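
The idea of touching only the pixels that carry a signal can be illustrated in software. The sketch below is a loose analogy, not the team’s hardware or algorithm: the function names, the 8×8 frame, the threshold of 0.1 and the one-pixel neighborhood radius are all assumptions chosen for illustration.

```python
def active_pixels(frame, threshold=0.1):
    """Return (row, col) coordinates whose reading exceeds the threshold."""
    return [
        (r, c)
        for r, row in enumerate(frame)
        for c, value in enumerate(row)
        if abs(value) > threshold
    ]


def process_sparse(frame, threshold=0.1, radius=1):
    """Return the set of pixels in a small patch around each active pixel.

    Everything outside these patches is skipped entirely.
    (threshold and radius are illustrative assumptions.)
    """
    rows, cols = len(frame), len(frame[0])
    visited = set()
    for r, c in active_pixels(frame, threshold):
        for rr in range(max(0, r - radius), min(rows, r + radius + 1)):
            for cc in range(max(0, c - radius), min(cols, c + radius + 1)):
                visited.add((rr, cc))
    return visited


# Hypothetical 8x8 touch frame with a short two-pixel stroke.
frame = [[0.0] * 8 for _ in range(8)]
frame[2][3] = 0.9
frame[2][4] = 0.8

touched = process_sparse(frame)
fraction = len(touched) / (8 * 8)
print(f"processed {len(touched)} of 64 pixels ({fraction:.0%})")
```

In this toy frame, only the pixels near the stroke are visited, and the rest of the screen is never read. The saving in a real device would depend on the sensor and circuit design, which this sketch does not model.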

Their proof-of-concept sensor system can currently recognize patterns with 87% to 92% accuracy, and it does so faster and with less energy than traditional computational methods, Xia said.

The technology could also be applied to event-based visual sensors like traffic cameras. “During the daytime, it’s very busy. It makes a lot of sense,” Xia said. “But at 2 a.m., there is less traffic. If you keep doing 30 frames per second, you are wasting a lot of resources.” Technology like Xia’s could save some of the electricity used to take pictures of empty highways all night.

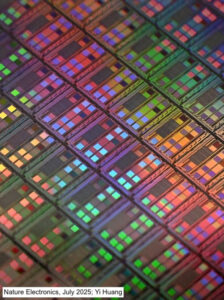

The team also published a second paper in Nature Electronics in which they demonstrated a proof-of-concept design of memristor-based, bioinspired artificial intelligence hardware called a cellular neural network (CeNN), a concept first envisioned in the 1980s. The paper describes the first implementation of a memristor-based CeNN. Each of the hardware’s repeating “cells” connects only to its nearest neighbors, rather than being wired all together as in the deep neural networks used in AI. In the design, memristors act as the synapses between cells. This simplifies the circuit wiring and greatly reduces data transmission, saving power. The local-connectivity architecture enables massively parallel processing while dramatically reducing the power-intensive data movement that bottlenecks conventional AI systems.
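
To make the nearest-neighbor wiring concrete, here is a minimal software sketch of one update step in a cellular neural network. It assumes the standard textbook (Chua-Yang) formulation with 3×3 feedback and input templates; the paper’s actual circuit, template values and update scheme are not given in this article, so every name and number below is an illustrative assumption. The template weights stand in for the role the memristor synapses play in hardware.

```python
def output(x):
    """Piecewise-linear cell output, clipped to the range [-1, 1]."""
    return 0.5 * (abs(x + 1) - abs(x - 1))


def cenn_step(state, inputs, A, B, bias, dt=0.1):
    """Advance every cell by one Euler step of size dt.

    Each cell reads only itself and its immediate neighbors (a 3x3 window).
    A: 3x3 feedback template applied to neighbor outputs (assumed values).
    B: 3x3 input template applied to neighbor inputs (assumed values).
    """
    rows, cols = len(state), len(state[0])
    new_state = [[0.0] * cols for _ in range(rows)]
    for r in range(rows):
        for c in range(cols):
            total = -state[r][c] + bias
            for dr in (-1, 0, 1):
                for dc in (-1, 0, 1):
                    rr, cc = r + dr, c + dc
                    if 0 <= rr < rows and 0 <= cc < cols:
                        total += A[dr + 1][dc + 1] * output(state[rr][cc])
                        total += B[dr + 1][dc + 1] * inputs[rr][cc]
            new_state[r][c] = state[r][c] + dt * total
    return new_state


# Hypothetical 5x5 grid: a single input at one corner, uniform input template.
zeros = [[0.0] * 5 for _ in range(5)]
inputs = [row[:] for row in zeros]
inputs[0][0] = 1.0
A = [[0.0] * 3 for _ in range(3)]   # no feedback in this toy example
B = [[1.0] * 3 for _ in range(3)]   # every neighbor's input weighted equally

after = cenn_step(zeros, inputs, A, B, bias=0.0)
print(after[1][1], after[2][2])  # the adjacent cell responds; the distant cell does not
```

Because each cell looks only at its 3×3 neighborhood, a single step moves information one cell at most, and all cells can in principle update at the same time. That locality is what keeps wiring and data movement small in the architecture described above.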