In this week’s R&D roundup, Lawrence Livermore National Laboratory lands a deal to build a cutting-edge telescope for space surveillance, while researchers decode the genome of the 1918 flu virus. In tech, Intel mulls bowing out of the bleeding-edge chip race as it struggles to adapt to a quickly evolving hardware landscape, while NVIDIA’s stock hits a record high. On the AI front, we’re seeing a metabolic digital twin that mirrors human biology and Anthropic’s account of how its own teams are using Claude Code to reshape their workflows.

Aerospace and defense technology

LLNL to build 10-inch space surveillance telescope for Firefly Elytra mission

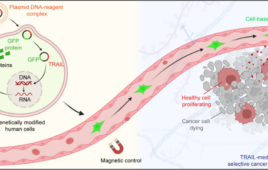

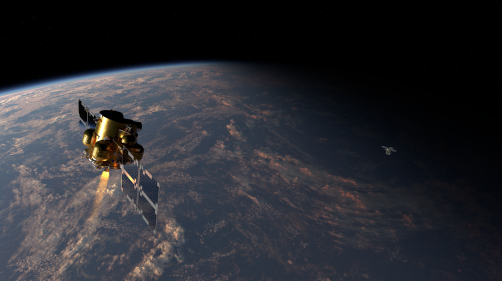

A rendering of Firefly’s Elytra Dawn vehicle, equipped with a Lawrence Livermore National Laboratory (LLNL) telescope for space domain awareness operations. Credit: Firefly Aerospace

The story: Lawrence Livermore National Laboratory has been selected by the Department of Defense’s Defense Innovation Unit to build a new monolithic telescope for a mission launching as early as 2027. The telescope will be operated onboard Firefly Aerospace’s Elytra orbital vehicle for a mission in low Earth orbit.

The numbers:

- The telescope will have a diameter of 10 inches (25 cm)

- LLNL will build the telescope and deliver it flight-ready in 13 months

- This will be LLNL’s third mission to develop space payloads for the Defense Department

Why it matters: The mission will include space domain awareness operations, which are needed for national security and identifying factors that could interfere with future space missions.

In other space news: The interstellar object 3I/ATLAS, first spotted on July 1, is the largest interstellar object ever seen. New photos from the Vera C. Rubin Observatory reveal that the object is about 7 miles (11.2 km) wide.

Health and medicine

Scientists decoded the RNA of the virus responsible for the 1918 influenza pandemic

Emergency hospital established in Zurich’s Tonhalle during the 1918 influenza pandemic. Credit: Schweizerisches Nationalmuseum, Inventarnummer LM-102737.46

The story: Scientists in Switzerland decoded the genome of the 1918 influenza virus from a preserved Zurich patient. The sample came from an 18-year-old patient who died during the first wave of the pandemic. The specimen had been preserved in a medical collection for over 100 years.

The details:

- By the first wave, the virus strain already carried three adaptations that made it more deadly to humans

- Two adaptations made the virus more resistant to the immune system

- The third mutation improved the virus’ ability to bind to receptors on human cells

- The researchers created a new method to recover RNA fragments from ancient samples

Why it matters: Insights from viral genomes could help improve responses to future pandemics. By understanding how the viruses mutate and become resistant, scientists can build more accurate prediction models.

Watch for: The research will require more reconstructions of virus genomes and in-depth analyses over long intervals.

Semiconductors/electronics

Intel mulls canceling 14A plans and laying off tens of thousands more workers

Intel’s process roadmap highlighting current and planned semiconductor manufacturing nodes, including 14A and upcoming technologies.

Image from Intel Foundry

The story: Intel is reportedly considering slowing down or even dropping development of its 14A (1.4-nm) process technology, a move that could signal a willingness to cede market share to rivals like TSMC and Samsung Foundry, which are gearing up for 2-nm mass production. “This is the first time Intel has admitted to considering withdrawing from the leading-edge semiconductor technology race for a major node,” noted Tom’s Hardware.

The details:

- Intel stated in its 10-Q filing that if it cannot secure a significant external customer for its 14A node, it may pause or discontinue development of 14A and successor nodes

- The 14A node is Intel’s first to utilize High-NA EUV lithography, with each ASML High-NA EUV tool costing around $380 million

- Intel is simultaneously laying off 15% of its workforce (around 21,000 employees) under new CEO Lip-Bu Tan

- The company posted an $821 million loss in Q1 2025 and expects its foundry business won’t break even until 14A in 2027

- Intel has already canceled its 20A node and is reportedly considering limiting 18A to internal use only

Why it matters: This would mark Intel’s exit from the leading-edge semiconductor race, ceding market dominance to TSMC and Samsung. The decision threatens Intel’s ability to compete in AI chips and advanced processors while undermining its decades-long position as a technology leader and custodian of Moore’s Law. The move could trigger massive write-offs on billions invested in development.

Watch for: Intel’s Q2 2025 earnings announcement for official guidance on 14A. Whether Intel can secure a major external customer (like Apple or Nvidia) for 14A will be critical. Final board decision expected in autumn 2025. Impact on US CHIPS Act subsidies and Intel’s Ohio fab expansion plans remains uncertain.

Technology/Markets

NVIDIA stock hits new record high as tech giants ramp up AI infrastructure spending

NVIDIA logo displayed against a backdrop of stock market charts, illustrating the company’s recent share price surge. Credit: Adobe Stock

The story: NVIDIA touched a new record high on Friday as investors anticipate massive artificial intelligence infrastructure investments from major tech companies. The chip maker’s stock reached an intraday high of $174.72, driven by Google’s increased capital expenditure guidance and expectations for similar spending from other tech giants.

The numbers:

- NVIDIA stock was up 0.5% at $174.53 in early trading, touching an intraday high of $174.72

- Google increased its 2025 capital expenditure forecast by 13% to approximately $85 billion

- Amazon expects $100 billion in capital spending for its full year

- Microsoft is on track to invest approximately $80 billion in 2025

- Meta has guided for annual capex of up to $72 billion

Why it matters: The record high reflects NVIDIA’s dominant position in the AI infrastructure market as tech giants race to build out data centers for AI workloads. Despite Google developing its own TPUs with Broadcom, executives reaffirmed their commitment to offering NVIDIA GPUs to cloud customers, validating NVIDIA’s crucial role in the AI ecosystem. The combined spending from major tech companies represents hundreds of billions in AI infrastructure investment, with NVIDIA positioned as the primary beneficiary.
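As a rough check on the “hundreds of billions” figure, the capex numbers quoted above can be added up directly. The sketch below is illustrative only: it treats each figure as a point estimate (Meta’s is an upper bound), mixes calendar and fiscal years as the list does, and assumes Google’s 13% increase is measured against its prior forecast.

```python
# Capex figures in billions of US dollars, as quoted in the list above.
# Assumption: each is treated as a point estimate; Meta's is "up to" $72B.
capex_billion_usd = {
    "Google": 85,
    "Amazon": 100,
    "Microsoft": 80,
    "Meta": 72,
}

# Combined spending across the four companies.
total = sum(capex_billion_usd.values())
print(f"Combined capex: about ${total} billion")

# Google's forecast implied before the 13% increase
# (assumption: the 13% is relative to the prior forecast).
google_prior = capex_billion_usd["Google"] / 1.13
print(f"Implied prior Google forecast: about ${google_prior:.0f} billion")
```

Under these assumptions the four forecasts sum to roughly $337 billion, and Google’s earlier forecast works out to about $75 billion.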

Watch for: Earnings reports from Amazon, Microsoft, and Meta next week, which will provide further clarity on AI infrastructure spending. Installation ramp-up of NVIDIA’s next-generation Blackwell chips in the second half of 2025. Whether cloud providers remain capacity-constrained despite massive investments, which could drive even higher spending in 2026.

Scientific computing and big data (AI)

Scientists created the first digital twin of the human metabolism

The research team at Università Cattolica del Sacro Cuore, Rome, who developed the world’s first Quantum Metabolic Avatar—a digital twin of human metabolism powered by quantum algorithms. Credit: Università Cattolica del Sacro Cuore

The story: Physicists at the Università Cattolica del Sacro Cuore created a digital twin of the human metabolism. The avatar simulates a human metabolism using quantum algorithms and lifestyle data.

The details:

- The avatar can model various diseases, such as diabetes

- The model is powered by real data from real people, not by classical AI

- The system can make predictions up to a week in advance

- The predictions include weight, lean mass, microbiota and whether the person is gaining energy or burning it

Why it matters: The model could help answer questions, such as why a diet works for one person but not another. It could enable patients to have custom interventions based on their biology.

Watch for: The researchers aim to expand the model to include more metabolic processes and to create a generalized version that will not require historical data from the patient.

Scientists create first antimatter ‘qubit’ that could unlock universe’s biggest mystery

[Image courtesy of Adobe Stock]

The details:

- The antiproton was kept oscillating between two quantum spin states for 50 seconds – a record for antimatter

- Unlike regular qubits used in quantum computing, this antimatter qubit will test fundamental physics laws

- The BASE experiment uses sophisticated electromagnetic Penning traps to isolate antiprotons and prevent matter-antimatter annihilation

- The breakthrough enables coherent quantum transition spectroscopy for antimatter, a technique previously only possible with ordinary matter

- Future BASE-STEP system could extend coherence times up to 500 seconds by transporting antiprotons to quieter magnetic environments

Why it matters: This achievement addresses one of physics’ most profound mysteries: why the universe contains vastly more matter than antimatter when the Big Bang should have created equal amounts of both. The antimatter qubit enables 10- to 100-fold improvements in measuring differences between matter and antimatter properties. Any detected violation of charge-parity-time (CPT) symmetry – the principle that matter and antimatter behave identically – could point to new physics beyond the Standard Model and explain our matter-dominated universe.

Watch for: Results from the portable BASE-STEP system that will transport antiprotons away from CERN’s noisy environment. Precision measurements of antiproton magnetic moments that could reveal subtle matter-antimatter differences. Whether this technique can be applied to other antimatter particles like positrons or antihydrogen atoms. Potential discoveries that could finally explain the universe’s matter-antimatter asymmetry.


Anthropic’s teams share significant gains with Claude Code, now an agentic tool

Image from Anthropic

The story: Anthropic has released a detailed look at how its internal teams are using Claude Code, revealing significant productivity gains and a transformation in workflows. The case studies show both technical and non-technical staff leveraging the tool for everything from debugging complex infrastructure to automating marketing tasks, reportedly turning Claude Code into an agentic partner for a wide array of business functions.

The details:

- Widespread internal adoption: Anthropic teams including data infrastructure, product development, security, marketing, and legal have integrated Claude Code into their daily operations

- Automated workflows for non-engineers: The finance team can now execute complex data workflows by writing plain-text descriptions, and the growth marketing team built an agentic system to automate the creation of Google Ads creatives

- Accelerated development and debugging: Engineers are using Claude Code for rapid prototyping with an “auto-accept mode” and have cut incident resolution times from 15 minutes to 5 by feeding it stack traces for analysis

- Enhanced onboarding and collaboration: New hires use Claude Code to quickly understand large codebases, and product designers are directly implementing front-end changes, bridging the gap between design and engineering

- From disposable to persistent tools: The data science team is now building reusable React dashboards for model analysis instead of one-off Jupyter notebooks

- Cross-language capabilities: The inference team uses Claude Code to write logic in unfamiliar programming languages like Rust for testing purposes, eliminating the need to learn new languages for specific tasks

- Novel use cases: A member of the legal team built a custom accessibility app for a family member in under an hour, showcasing the tool’s potential for non-developers

Why it matters: This deep dive into Anthropic’s own use of Claude Code demonstrates a shift from coding assistants to more autonomous, agentic systems that a broader range of employees can use. The ability of non-technical teams to build custom tools and automations points to a significant change in how work can be accomplished within a company. The reported productivity gains and the novel applications from teams like legal and marketing highlight the potential of these tools, although some social media users have also reported misfires in which such tools damaged databases or codebases.