In just a few quarters, Thermo Fisher launched the DXR3 SmartRaman+ for at-line QC and the TruScan G3 for handheld raw-material identification, Bruker acquired Tornado Spectral Systems to deepen its process Raman lineup, Endress+Hauser continued the renaming of the Kaiser Raman portfolio, Metrohm expanded its 1064 nm handheld range with the NanoRam-1064 for pharma raw-material ID, and FOSS acquired Wasatch Photonics for just under DKK 250 million. The Raman market is expanding across handheld, at-line, process, microscope, and OEM layers at the same time.

That breadth creates a buying problem. Raman systems are still too often discussed as though they belong to a single product category, even when they are optimized for very different workflows. A handheld designed for rapid incoming-material checks is not really competing with a rack-mounted process analyzer feeding a control loop, or with a research microscope built for micro-defect analysis. Buyers get into trouble when they compare across classes instead of within them.

Instead of asking which Raman system to buy, buyers should first ask which class of instrument fits the job.

The Raman market doesn’t follow a single industry taxonomy, but for procurement purposes, four end-user instrument classes and one upstream OEM layer cover most of the decisions buyers actually face. Each class is optimized for a different primary workflow, and comparing across classes is like comparing a pickup truck with a forklift: both move things, but the jobs are distinct.

The four classes are handheld RMID (raw material identification), at-line benchtop QC, process Raman analyzers, and Raman microscopes. A fifth layer, OEM spectrometer engines and gratings, sits upstream in the supply chain. Companies like Ibsen Photonics and Wasatch Photonics supply OEM spectrometers and grating components that end up inside finished Raman instruments across all four classes. With a majority stake in Ibsen already in hand and Wasatch acquired in 2025, FOSS now has an unusually strong position in Raman-related OEM components. That layer doesn’t compete directly for end-user budgets, but it shapes what’s possible in the other four.

The four-class landscape

| Class | Primary Job | Representative Products | Typical Deployment |
|---|---|---|---|
| Handheld RMID | Raw material ID and verification | TruScan G3, Agilent Vaya, Bruker BRAVO, Rigaku Progeny, Metrohm NanoRam-1064 | Receiving dock, warehouse, floor QC |
| At-Line Benchtop QC | Repeatable through-container and batch QC | DXR3 SmartRaman+ | QC lab, near production line |
| Process Analyzers | In-line / in-situ process monitoring | E+H Raman Rxn2/Rxn4, Bruker/Tornado HyperFlux PRO Plus, Process Guardian | PAT, process line, continuous manufacturing |
| Raman Microscopes | Micro-defect analysis, chemical mapping | Renishaw inVia Qontor, Horiba LabRAM Soleil, WITec alpha series | R&D labs, failure analysis |

Vendors compete hard within each class. But the boundaries get messy at specific decision points, and that’s where the costliest mistakes happen. Prices run from the low five figures for a basic handheld to several hundred thousand dollars for a fully configured confocal Raman microscope, so a class mismatch is expensive. The most common trap is in pharma, where handheld RMID and process Raman both carry the word “Raman” on the spec sheet but serve different jobs. A handheld built for incoming-material identity testing should not be stretched into an in-line PAT role, where the probes, chemometric models, software controls, and validation requirements are fundamentally different. In GMP settings, PIC/S Annex 8 allows risk-based exceptions to per-container identity testing under certain conditions, such as trusted single-source supply and regular audits, but it does not erase the distinction between warehouse ID workflows and real-time process monitoring. The reverse mistake, buying a high-end process analyzer for a loading-dock ID check, is just as costly and harder to unwind, because by the time the mismatch is obvious the validation effort is already sunk.

Where the classes collide

Handheld RMID vs. at-line benchtop QC. This is the most common boundary conflict. A QC team doing identity testing may start with handhelds for speed and portability, then discover that operator variability, container curvature, or documentation requirements push them toward a fixture-based benchtop system. Thermo Fisher positions the TruScan G3 and DXR3 SmartRaman+ as complementary for exactly this reason: the G3 is built for non-technical operators doing raw material ID at the warehouse or loading dock, while the SmartRaman+ adds automated sampling with custom multi-sample holders, which Thermo Fisher says compresses batch release testing from weeks to hours. The gap between them is where most pharma buyers stumble: they try to make a handheld do batch-release work, or they buy a benchtop when the real need is point-of-receipt screening.

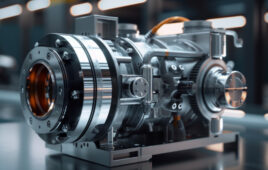

At-line benchtop QC vs. process analyzers. This boundary heats up when a team tries to move from “test near the line” to “measure in the line.” If results need to feed a control loop or trigger real-time adjustments, you’re in process Raman territory, even if the instrument started life in a QC lab. The Endress+Hauser Raman Rxn2 and Rxn4 target this space with multi-channel fiber-optic probes designed for 24/7 monitoring. On the Bruker side, the January 2024 acquisition of Tornado Spectral Systems was explicitly about owning this boundary: Bruker bought the Canadian process Raman specialist to fill out its biopharma PAT portfolio. The resulting HyperFlux PRO Plus uses Tornado’s patented HTVS (High Throughput Virtual Slit) technology, which the company says delivers up to 30x higher signal intensity than conventional slit-based spectrometers without sacrificing resolution. That is the kind of sensitivity gap that can make a benchtop instrument inadequate for dynamic process control.
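To see why a signal multiple translates into a process-control advantage, here is a back-of-envelope estimate that assumes shot-noise-limited detection. That is a simplification: real systems also carry detector, read, and fluorescence-background noise. With a signal rate S and integration time t, the collected counts are St and the shot noise is the square root of St:

```latex
\mathrm{SNR} = \frac{S t}{\sqrt{S t}} = \sqrt{S t},
\qquad
\frac{\mathrm{SNR}(gS,\,t)}{\mathrm{SNR}(S,\,t)} = \sqrt{g} \approx 5.5 \ \text{for } g = 30,
\qquad
t_{\text{equal SNR}} = \frac{t}{g}
```

Under these assumptions, a 30x signal gain buys either roughly 5.5x better SNR at the same integration time or the same SNR in one-thirtieth of the time. The second option is the one that matters when a control loop needs frequent, fresh measurements.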

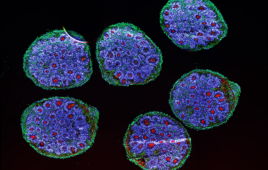

Raman microscopes vs. everything else. Microscopes compete least directly with the other classes, unless a buyer tries to force one instrument to handle both routine QC and micro-scale failure analysis. The Horiba LabRAM Soleil, Renishaw inVia Qontor, and WITec alpha series are capable, highly configurable research instruments. But Renishaw itself draws the line: it sells the inVia as a fully configurable research-grade system and the RA802 as a dedicated high-speed pharmaceutical analysis system. That product split is an admission that research flexibility and QC repeatability pull in opposite directions, especially with rotating operators.

The consolidation behind the product launches

Taken together, these launches, acquisitions, and rebrands show how quickly the Raman market is changing.

Bruker + Tornado Spectral Systems (January 2024). Tornado claims its patented HTVS technology delivers 10–30x higher signal intensity than conventional slit-based spectrometers. Post-acquisition, Bruker fields a three-tier process Raman lineup: SuperFlux for lab-scale work, HyperFlux PRO Plus for pilot and development, and Process Guardian for production-floor deployment. This sharpens Bruker’s challenge to Endress+Hauser in process Raman for pharma and bioprocess applications.

FOSS Group + Wasatch Photonics (November 10, 2025, just under DKK 250 million / ~$39M). This one doesn’t show up on end-user shortlists, but it matters. Wasatch supplies compact spectrometers and VPH (volume phase holographic) transmission gratings used by OEMs and integrators across the Raman market. FOSS, a Danish family-owned analytical company, is assembling a photonics component portfolio under FOSS Photonics, where Wasatch joins Ibsen Photonics, SPIO Systems, and Graspian ApS. The signal: capital is flowing into the Raman supply chain at the component level, not just at the instrument level.

Endress+Hauser / Kaiser Raman rebrand (name changes began January 2022). The hardware, technology, service, and support are unchanged. The branding, documentation, and certifications are shifting to Endress+Hauser names, and new orders may still ship with mixed old and new branding until inventory is depleted. For existing Kaiser users, this is cosmetic. For new buyers evaluating process Raman, it signals that E+H is fully committed to Raman as a core analytical modality, not a bolt-on acquisition.

The AI question Raman buyers should ask

Many Raman vendors now mention AI, machine learning, automation, or embedded chemometrics somewhere in their materials. Spectroscopy Online’s 2025 year-in-review called 2025 a turning point for AI-enabled vibrational spectroscopy, citing Transformer-based spectrum-to-structure models, ML-accelerated molecular dynamics, and emerging generalist spectral foundation models. A companion review of 2025 AI developments documented the shift across 30 publications, from agricultural sensing to precision oncology. The Horiba LabRAM Soleil emphasizes automation and software-assisted workflows. Bruker’s Tornado platform highlights automated system health monitoring and fault detection. Thermo’s TruScan line uses patented multivariate residual analysis, an embedded chemometrics engine that performs classification-style decisions on-instrument.

But AI in Raman is a capability layer, not a product class. It manifests differently by instrument type: handheld systems use embedded classification for pass/fail decisions; benchtop QC uses guided workflows and automated method development; process analyzers feed real-time chemometric models into control loops; and microscopes use ML for spectral deconvolution and automated mapping.
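To make “embedded classification for pass/fail decisions” concrete, here is a minimal sketch of one of the simplest library-matching approaches: a correlation score between a sample spectrum and a reference spectrum, compared with a threshold (a hit-quality-index-style metric). It is not Thermo Fisher’s multivariate residual analysis or any vendor’s algorithm; the function names, the 95 threshold, and the synthetic spectra are all hypothetical.

```python
import math


def match_score(sample, reference):
    """Correlation-based match score (hit-quality-index style), 0-100.

    Spectra must share one wavenumber grid. Pearson r ignores intensity
    scaling and constant offsets; negative correlations score 0.
    """
    if len(sample) != len(reference) or len(sample) < 2:
        raise ValueError("spectra must share the same wavenumber grid")
    mean_s = sum(sample) / len(sample)
    mean_r = sum(reference) / len(reference)
    ds = [x - mean_s for x in sample]
    dr = [y - mean_r for y in reference]
    cov = sum(a * b for a, b in zip(ds, dr))
    denom = math.sqrt(sum(a * a for a in ds) * sum(b * b for b in dr))
    if denom == 0:
        return 0.0
    r = cov / denom
    return 100.0 * max(r, 0.0) ** 2


def identity_check(sample, reference, threshold=95.0):
    """Hypothetical pass/fail rule: PASS when the score meets the method threshold."""
    score = match_score(sample, reference)
    return ("PASS" if score >= threshold else "FAIL"), score


def band(grid, center, width, height=1.0):
    """Synthetic Gaussian band, for demonstration only."""
    return [height * math.exp(-(((x - center) / width) ** 2)) for x in grid]


# Synthetic demo data on a hypothetical 2 cm^-1 grid from 400 to 1798 cm^-1.
grid = [400 + 2 * i for i in range(700)]
reference = [a + b for a, b in zip(band(grid, 1000, 8), band(grid, 1200, 8))]
near_neighbor = [a + b for a, b in zip(band(grid, 1000, 8), band(grid, 1215, 8))]
same_material = [0.8 * v + 0.05 for v in reference]  # weaker signal plus baseline offset

print(identity_check(same_material, reference))  # PASS, score ~100
print(identity_check(near_neighbor, reference))  # FAIL, well below threshold
```

Because the score is a correlation, the weaker, offset copy of the reference still passes. Real near-neighbors usually share far more bands than this toy pair, so they can score much closer to the threshold, which is why discrimination has to be demonstrated on your own chemistry rather than assumed.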

For buyers in regulated environments — which covers many pharma and biotech Raman deployments — the practical question is not “does it have AI?” but rather:

Where does the intelligence run? On-instrument (embedded), on-premises (server), or in the cloud? Each has different implications for validation, 21 CFR Part 11 compliance, and IT security review.

What exactly is being automated? Spectral preprocessing? Library matching? Chemometric model building? Method development? Each carries different risk profiles and requires different levels of human oversight.

How does the model transfer across instruments? A recent Spectroscopy Online analysis of ML-enabled Raman highlighted hardware stability and metadata capture as key bottlenecks limiting model portability. If your chemometric model doesn’t travel reliably between units, the AI advantage evaporates when you scale up.

Choosing within a class

Once you’ve matched your primary workflow to the right class, a handful of questions do most of the work of narrowing the shortlist.

Laser wavelength and fluorescence risk. A system’s best wavelength depends on your samples. 532 nm delivers strong Raman signal but is more likely to provoke fluorescence in real-world matrices. 785 nm is a common workhorse wavelength in pharma and many routine ID workflows. 1064 nm is the fluorescence escape hatch, at the cost of weaker signal and longer acquisition times. If fluorescence is your primary failure mode, treat wavelength as a gating requirement.
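The “weaker signal” trade-off can be estimated from the approximate 1/λ⁴ dependence of Raman scattering efficiency. The sketch below is a back-of-envelope calculation only: it ignores detector quantum efficiency, optical throughput, and how much laser power a sample tolerates, and the function names are hypothetical.

```python
def relative_raman_signal(excitation_nm, reference_nm=785.0):
    """Approximate scattering efficiency relative to a reference laser (1/lambda^4 law).

    Ignores detector quantum efficiency, optical throughput, and usable laser power.
    """
    return (reference_nm / excitation_nm) ** 4


def scattered_wavelength_nm(excitation_nm, raman_shift_cm1):
    """Absolute wavelength of Stokes-scattered light for a given Raman shift."""
    excitation_cm1 = 1e7 / excitation_nm  # convert nm to wavenumbers (cm^-1)
    return 1e7 / (excitation_cm1 - raman_shift_cm1)


for laser_nm in (532.0, 785.0, 1064.0):
    print(
        f"{laser_nm:.0f} nm: relative signal {relative_raman_signal(laser_nm):.2f}x, "
        f"3000 cm^-1 band at {scattered_wavelength_nm(laser_nm, 3000):.0f} nm"
    )
```

Relative to 785 nm, this gives roughly 4.7x the scattering efficiency at 532 nm and about 0.3x at 1064 nm. The second function shows another cost of 1064 nm: a band at a 3000 cm⁻¹ shift lands near 1563 nm, beyond the range of silicon detectors, so 1064 nm systems typically rely on InGaAs detectors, which are generally noisier.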

Through-container performance is a packaging problem. Whether a “through-container” or “through-barrier” measurement works depends on material, thickness, tint, curvature, and operator positioning. Fixtures and alignment can matter as much as raw specs. Ask vendors to run your actual packaging, including worst-case lots.

Repeatability beats peak performance in QC. For QC buyers, the win condition is consistent pass/fail decisions across operators and shifts. Look for sample holders that force geometry, guided workflows that reduce judgment calls, batch modes with clean reporting, and library lock-down when needed.

Software, audit trails and validation. In regulated environments, the “instrument” includes software controls, audit trails, user permissions, and validation documentation. Ask: What audit trail is captured automatically? How are methods versioned? What does IQ/OQ support look like? How are results exported? If you’re subject to 21 CFR Part 11, these aren’t nice-to-haves — they’re gating criteria.
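As an illustration of what “captured automatically” can mean, here is a minimal sketch of an append-only audit trail in which each entry records who did what, to which object, when, and why, and carries a hash of the previous entry so silent edits become detectable. Hash chaining is one common tamper-evidence technique, not something 21 CFR Part 11 prescribes, and the class, field names, and method names are hypothetical; treat it as a prompt for vendor questions, not a compliance implementation.

```python
import hashlib
import json
from datetime import datetime, timezone


class AuditTrail:
    """Hypothetical append-only audit log; each entry hashes its predecessor."""

    def __init__(self):
        self.entries = []

    def record(self, user, action, target, reason, timestamp=None):
        prev_hash = self.entries[-1]["hash"] if self.entries else "0" * 64
        entry = {
            "user": user,
            "action": action,  # e.g. "method_edit", "result_override"
            "target": target,  # e.g. method name and version
            "reason": reason,
            "timestamp": timestamp or datetime.now(timezone.utc).isoformat(),
            "prev_hash": prev_hash,
        }
        payload = json.dumps(entry, sort_keys=True).encode("utf-8")
        entry["hash"] = hashlib.sha256(payload).hexdigest()
        self.entries.append(entry)
        return entry

    def verify(self):
        """True if every entry still matches its hash and links to its predecessor."""
        prev_hash = "0" * 64
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev_hash"] != prev_hash:
                return False
            payload = json.dumps(body, sort_keys=True).encode("utf-8")
            if hashlib.sha256(payload).hexdigest() != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True


trail = AuditTrail()
trail.record("analyst1", "method_edit", "lactose-ID v3", "updated pass threshold")
trail.record("qa_lead", "method_approve", "lactose-ID v3", "change reviewed")
print(trail.verify())  # True
trail.entries[0]["reason"] = "quietly edited"  # simulate tampering
print(trail.verify())  # False
```

One design limit worth raising with vendors: a chain like this exposes edits and deletions in the middle of the log, but not truncation of the most recent entries unless the latest hash is also stored somewhere independent.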

Connectivity and integration. For manufacturing-adjacent Raman, integration determines whether a pilot turns into routine use. Can results push into LIMS or MES without manual re-entry? What metadata travels with results? If OPC UA is offered, ask for the exact object model and a live demonstration of the data flow you will actually use.
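A quick way to pressure-test “what metadata travels with results” is to write down the record you expect the LIMS or MES to receive. The sketch below serializes one hypothetical result as JSON and refuses to export it if any context field is empty. The field names and values are illustrative assumptions, not a LIMS, MES, or OPC UA schema; the real object model is what the vendor should demonstrate.

```python
import json
from dataclasses import asdict, dataclass


@dataclass
class RamanResult:
    """Hypothetical result record; field names are illustrative, not a standard schema."""
    sample_id: str
    lot: str
    verdict: str  # e.g. "PASS" or "FAIL"
    score: float
    method_name: str
    method_version: str
    library_version: str
    instrument_serial: str
    laser_nm: int
    operator: str
    acquired_utc: str  # ISO 8601 timestamp


def to_lims_payload(result: RamanResult) -> str:
    """Serialize a result with its context; refuse to export if any field is empty."""
    record = asdict(result)
    missing = [name for name, value in record.items() if value in ("", None)]
    if missing:
        raise ValueError(f"incomplete metadata, not exported: {missing}")
    return json.dumps(record, sort_keys=True)


result = RamanResult(
    sample_id="RM-0412", lot="L2291", verdict="PASS", score=98.7,
    method_name="lactose-ID", method_version="3", library_version="2025.1",
    instrument_serial="SN-HYPOTHETICAL-01", laser_nm=785, operator="analyst1",
    acquired_utc="2025-03-04T09:15:00Z",
)
print(to_lims_payload(result))
```

Rejecting incomplete records at export time, rather than downstream, keeps a missing method or library version from silently turning into a manual re-entry step later.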

Model and method transferability. If your workflow depends on chemometric models or ML-assisted classification, ask how those models move between instruments, sites, and operators, and what the vendor does about the hardware-stability and metadata-capture constraints that limit portability. A model that works beautifully on the demo unit but won’t transfer to your production fleet is a procurement failure, not a vendor win.
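Hardware stability is the part of transferability you can measure on demo day. Here is a minimal sketch, assuming each unit measures the same wavenumber-standard sample (for example polystyrene, whose strong ring-breathing band sits near 1001 cm⁻¹) on a uniform grid: locate the band on each unit with three-point parabolic interpolation and compare positions. The function names, window, and synthetic spectra are hypothetical, and a single-band check is only a first screen; a full transfer assessment also covers intensity response and the model’s actual decisions.

```python
import math


def band_center(wavenumbers, intensities, lo, hi):
    """Estimate a band position inside [lo, hi] by three-point parabolic interpolation.

    Assumes a uniform, increasing wavenumber grid.
    """
    window = [i for i, w in enumerate(wavenumbers) if lo <= w <= hi]
    if len(window) < 3:
        raise ValueError("window must contain at least three points")
    k = max(window, key=lambda i: intensities[i])
    if k in (window[0], window[-1]):
        raise ValueError("maximum sits on the window edge; widen the window")
    y0, y1, y2 = intensities[k - 1], intensities[k], intensities[k + 1]
    denom = y0 - 2 * y1 + y2
    if denom == 0:
        return wavenumbers[k]
    step = wavenumbers[k + 1] - wavenumbers[k]
    return wavenumbers[k] + 0.5 * (y0 - y2) / denom * step


def synthetic_band(grid, center, width=4.0):
    """Gaussian stand-in for a reference band (demonstration data only)."""
    return [math.exp(-(((x - center) / width) ** 2)) for x in grid]


grid = [950 + i for i in range(101)]  # 950-1050 cm^-1, 1 cm^-1 steps
unit_a = synthetic_band(grid, 1001.4)  # hypothetical unit A
unit_b = synthetic_band(grid, 1002.9)  # hypothetical unit B, axis off by +1.5
offset = band_center(grid, unit_b, 990, 1015) - band_center(grid, unit_a, 990, 1015)
print(f"unit B reads {offset:+.2f} cm^-1 relative to unit A")
```

An offset of a cm⁻¹ or two looks small on a spec sheet, but a model built on one unit’s axis may treat it as a shifted spectrum, which is one way hardware differences erode portability.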

Sizing up Raman platforms on a level playing field

Use the same acceptance test on every vendor in your shortlist, scored the same way. Here’s a framework that works as a template across classes, though the pass/fail thresholds and weighting should shift by class.

| # | Test | Protocol and What It Reveals |
|---|---|---|
| 1 | Baseline ID set | 5 known materials including at least one near-neighbor pair, 10 repeats each. Include a blinded subset so the vendor can’t tune on the fly. Establishes spectral quality and identification reliability. |
| 2 | Hardest sample | Your most fluorescent or heterogeneous material. Exposes wavelength and sensitivity limits. |
| 3 | Real packaging | The actual container used on the floor, not a substitute. Tests through-container claims with your geometry. |
| 4 | Throughput trial | A batch size matching a real shift workload. Reveals whether quoted speed holds under sustained use. |
| 5 | Operator variability | Two operators, minimal coaching, compare variance. The test most vendors would rather skip. |
| 6 | Drift check | Run the baseline set at the start and end of the demo day. If multiple units are in play, test whether the same library or model behaves consistently across instruments. |
| 7 | Data integrity walk-through | Audit trail, permissions, library governance. Non-negotiable in regulated environments. |
| 8 | Integration proof | The exact export or connection pathway you intend to use. Catches “OPC UA available” vs. “OPC UA tested.” |
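One way to apply this framework consistently is to rate every vendor 0–5 on each test with the same rubric, treat some tests as hard gates, and compute a weighted average. The sketch below does that; the weights, the gate list, the minimum gate score, and the vendor ratings are hypothetical placeholders for a handheld RMID evaluation and should be reset for each class.

```python
TESTS = (
    "baseline_id", "hardest_sample", "real_packaging", "throughput",
    "operator_variability", "drift", "data_integrity", "integration",
)

# Hypothetical weights for a handheld RMID evaluation; reset them for each class.
HANDHELD_WEIGHTS = {
    "baseline_id": 3, "hardest_sample": 2, "real_packaging": 3, "throughput": 2,
    "operator_variability": 3, "drift": 1, "data_integrity": 3, "integration": 1,
}
HANDHELD_GATES = ("operator_variability", "data_integrity")


def score_vendor(scores, weights, gates, min_gate_score=3):
    """Return (weighted 0-5 score, []) or (None, failed_gates).

    scores: test name -> 0-5 rating from the demo, using one rubric for every vendor.
    """
    missing = [t for t in TESTS if t not in scores]
    if missing:
        raise ValueError(f"unscored tests: {missing}")
    failed = [t for t in gates if scores[t] < min_gate_score]
    if failed:
        return None, failed
    total_weight = sum(weights[t] for t in TESTS)
    weighted = sum(scores[t] * weights[t] for t in TESTS) / total_weight
    return round(weighted, 2), []


vendor_a = dict(zip(TESTS, (4, 3, 4, 5, 4, 4, 5, 3)))
vendor_b = dict(zip(TESTS, (5, 5, 4, 5, 2, 4, 5, 4)))
print(score_vendor(vendor_a, HANDHELD_WEIGHTS, HANDHELD_GATES))  # (4.11, [])
print(score_vendor(vendor_b, HANDHELD_WEIGHTS, HANDHELD_GATES))  # (None, ['operator_variability'])
```

Gates run before weighting on purpose: strong scores elsewhere should not be able to buy back a failed data-integrity or operator-variability result.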

Test five, operator variability, is the one that separates instruments in practice. A handheld can win on speed at receiving. A benchtop can win on repeatability near production. A process analyzer can win when continuous monitoring is the real objective. But if two operators on the same instrument produce meaningfully different results, that practical performance gap will swamp whatever the spec sheet says.
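For test 5, a simple paired comparison is often enough to show whether operators diverge. The sketch below assumes both operators measure the same samples in the same order and that the instrument reports a 0–100 match score with a pass threshold; the function name, threshold, and data are hypothetical. It is a descriptive check, not a formal gauge R&R study.

```python
from statistics import mean, stdev


def operator_comparison(scores_a, scores_b, threshold=95.0):
    """Compare two operators who measured the same samples in the same order.

    Returns per-operator mean and SD, the mean paired difference (B minus A),
    and the fraction of samples where the two pass/fail calls disagree.
    """
    if len(scores_a) != len(scores_b) or len(scores_a) < 2:
        raise ValueError("need paired results for at least two samples")
    calls_a = [s >= threshold for s in scores_a]
    calls_b = [s >= threshold for s in scores_b]
    disagreements = sum(a != b for a, b in zip(calls_a, calls_b))
    return {
        "mean_a": mean(scores_a), "sd_a": stdev(scores_a),
        "mean_b": mean(scores_b), "sd_b": stdev(scores_b),
        "mean_diff": mean(b - a for a, b in zip(scores_a, scores_b)),
        "call_disagreement_rate": disagreements / len(scores_a),
    }


# Hypothetical match scores for the same five samples.
op_a = [98.5, 97.9, 98.8, 99.1, 98.2]
op_b = [96.1, 93.8, 97.0, 94.5, 96.4]
report = operator_comparison(op_a, op_b)
for key, value in report.items():
    print(f"{key}: {value:.2f}")
```

In this example operator B’s scores run about 3 points lower on average and two of five pass/fail calls flip, even though the instrument and samples are identical.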