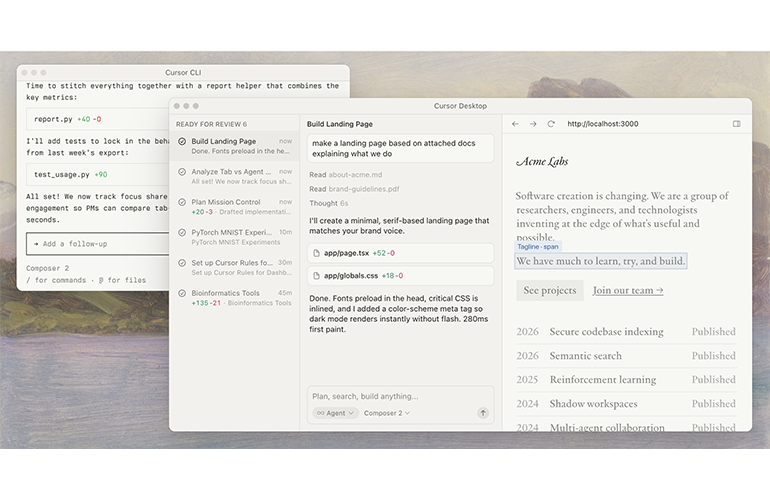

A screenshot of Cursor as

On stage at NTT Upgrade, Cursor COO Jordan Topoleski explained that a legion of AI agents built a web browser with more than one million lines of code, starting from a blank slate. Kind of.

Jordan Topoleski

Referring to the project as a “really interesting experiment,” Topoleski said the goal was to test whether a long-running agent could “build a browser from scratch.”

Historically, building a browser, whether from scratch or not, would take a team of engineers. Netscape was built in about six months by a small team in the 1990s. In the mid-2000s, it took an initially small team about 29 months to build the first iteration of Chrome, which launched in 2008. The time horizon for Cursor’s experiment was dramatically shorter. “Over the course of six days, [it produced] like 1.3 million lines of code. And while there were a lot of bugs at the end, you could actually type in google.com, and it would render it in this browser from scratch.”

His anecdote closely matches Cursor’s FastRender project, which Wilson Lin, a Cursor engineer working with an essentially unlimited token budget, described at length in a blog post and on YouTube. The project was built over a few weeks, with the longest continuous agent run lasting about a week. The demo shows the browser rendering Wikipedia and CNN (albeit with some black boxes where visuals should appear). The “from scratch” label comes with asterisks: the codebase uses Mozilla’s Servo engine, the Taffy Rust layout library and even an optional QuickJS binding that one agent pulled in.

“The fact that a few hundred or thousand agents concurrently can actually work together and produce code that is like 99% compilable and runs and is mostly aligned to the way you instructed it was kind of an interesting result,” Lin said in the YouTube interview. The full 1.3 GB repo contains 56,711 files and 3.16 million lines across 5,732 Rust source files, but that count includes vendored third-party source, test fixtures, agent scratchpad directories and generated documentation alongside the LLM-written code the agents actually produced.

The most interesting thing about FastRender is the way the project used multiple agents working in parallel to build different parts of the browser. —Simon Willison

Agents still lack human-like agency

While directionally impressive, FastRender hasn’t earned universally glowing reviews. Gregory Terzian, a Servo maintainer, called the code “a tangle of spaghetti” and said it is “a uniquely bad design that could never support anything resembling a real-world web engine.” But he expressed support for using AI to build a web engine, albeit with substantially more human input. “You need humans in the loop at all levels of abstractions; the agents should only be used to bang out features re-using patterns established or vetted by human experts,” he added.

Software Improvement Group (SIG) reached a similar conclusion after running the FastRender codebase through its Sigrid platform. It scored the code 1.3 out of 5 on maintainability (in the bottom 5% of systems SIG benchmarks) and 2.1 out of 5 on architecture quality, and estimated that the 3.16 million lines represent roughly 110 person-years of Rust development. Even so, the analysis called it “an impressive Agentic-AI experiment from Cursor in a brutally hard domain.”

FastRender, even if it is not perfect, is still an example of how far AI coding has come over the past couple of years. “We went from a place where, 12 months ago, the average enterprise customer we worked with had about 6% of the code that they pushed to production that was originally [written by AI],” Topoleski said. “Today, this number is over 60%.” Inside Cursor, the share is even higher: “We watched internally. Something like 70 to 75% of our code actually originated by AI in the last quarter.” He described the company as “an internal tools company that uses Cursor to build Cursor.”

Where the industry ends up in the coming years is anyone’s guess, but the ramifications are likely to be significant. “The cost of creating software is increasingly going to zero,” Topoleski said.

The NTT Upgrade conversation, moderated by NTT Venture Capital founding partner Vab Goel and NTT Research CMO Chris Shaw, also circled a central tension: AI is producing code at industrial volume, and organizations are still learning how to absorb that output. “This is almost a [powerful] problem,” Topoleski acknowledged, “where, as you write [more] of the code, you introduce some technical debt.”

One consequence of LLMs’ tendency to generate large volumes of code is ballooning codebases. “I was with the CIO of a very large insurance company a couple of weeks ago,” Topoleski said. “He said prior to that, we were shipping something like 150,000 lines of code per week across our whole organization. We’re about 800,000 lines of code per week, and all these other [things start] to break. Code review is breaking, our CI/CD pipelines and how we merge that into production is breaking, all these [things] start to happen.”


The fact that AI models are better at generating code than at architecting codebases complicates the recursion narrative, in which AI tools increasingly build themselves. So does the fact that rivals, with expert engineers of their own, also claim to benefit from recursion. OpenAI has said GPT-5.3-Codex was instrumental in creating itself, and Anthropic has made essentially the same claim. If Cursor’s internal productivity gains depend on frontier models supplied by OpenAI, Anthropic or Google, then Cursor’s success also strengthens the economic case for the model labs’ own coding agents, which Cursor, incidentally, helps fund through API fees.

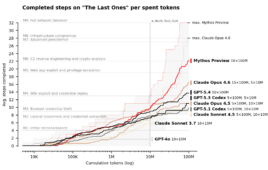

Meanwhile, frontier AI labs are showing signs of gating their most advanced models and technologies. Anthropic’s Claude Mythos Preview is not being released as an ordinary consumer or developer model. OpenAI, for its part, is expanding Trusted Access for Cyber with GPT-5.4-Cyber, a specialized GPT-5.4 variant for vetted defenders.

With reported talks for a new round at roughly $50 billion, Cursor is on track to retain its mantle as a defining software company of the AI era. But its rivals are worth substantially more. OpenAI recently announced $122 billion in committed capital at an $852 billion valuation. Anthropic, meanwhile, raised $30 billion at a $380 billion valuation, with Claude Code itself reportedly at a multibillion-dollar run-rate in February. Cursor is raising like a hypergrowth software company; its most dangerous competitors are raising and spending like infrastructure companies. Despite the competitive pressures, Google startup chief Darren Mowry recently cited Cursor as an example of the kind of AI “wrapper” company that may actually have a defensible moat. That moat shows up in its customer roster, which includes Stripe, OpenAI, Linear, Datadog, Nvidia, Figma, Ramp and Adobe.

Despite the “wrapper” label, Cursor’s defensibility stems from architectural innovations that standard IDE plugins cannot replicate. Because Cursor is a native fork of VS Code, it can run proprietary systems such as a “Shadow Workspace,” a hidden background environment where the AI pre-tests and corrects its own code against linters before the user ever sees it. In addition, Cursor doesn’t rely on frontier models to actually type out the changes; it uses a custom, fine-tuned 70-billion-parameter “Fast Apply” model. In 2024, Cursor said this model weaves complex diffs into existing files at 1,000 tokens per second.

While recursion appears to be the next focus, which AI labs benefit most from it is an open question. Anthropic recently published research in which nine Claude Opus 4.6 agents, working in parallel for five days on an alignment problem, recovered 97% of a performance gap, compared with the 23% that two human researchers recovered over seven days. “Automating this kind of research is already practical,” the authors wrote.
