AI systems are playing an active role in designing experiments, screening millions of compounds and apparently generating novel mathematical proofs. Meanwhile, U.S. patent law still requires a human inventor, which rules out naming an AI as the sole inventor. The gap between those two realities is creating uncertainty for R&D organizations filing IP based on AI-assisted discovery, even as the systems themselves move closer to work once treated as exclusively human.

No one has pushed that contradiction harder than Stephen Thaler, Ph.D., whose legal campaign turned a philosophical question about machine creativity into a series of patent fights and parallel copyright litigation. The Supreme Court declined to hear the patent inventorship question in Thaler v. Vidal in 2023, and more recently declined the copyright authorship question in Thaler v. Perlmutter, leaving both rulings intact.

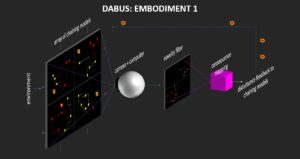

For years, the physicist and computer scientist Thaler tried to get patent offices around the world to name his AI system, DABUS, as the inventor on two patent applications. Before that campaign, Thaler built what he called the “Creativity Machine,” a neural network architecture in which one network generates novel outputs and a second evaluates them for usefulness. Thaler himself is listed as the human inventor on patents covering that system, including US 5,659,666, filed in 1994 and granted in 1997, titled “Device for the autonomous generation of useful information,” and US 7,454,388, issued in 2008, titled “Device for the autonomous bootstrapping of useful information.”

DABUS

Thaler claims that DABUS (Device for the Autonomous Bootstrapping of Unified Sentience) independently conceived at least two new products: a food container with a fractal surface that aids insulation and stacking, and a flashing light designed to attract attention in search-and-rescue emergencies. In July 2019, he filed two patent applications with the USPTO, naming DABUS as the sole inventor and arguing that he himself had not contributed to conception. He contended that listing himself as inventor would amount to a materially false statement to the government.

An electro-optical embodiment of the DABUS paradigm as shown on Imagination Engines.

The USPTO rejected the notion that AI could be an inventor. Conception, the agency wrote, is a “mental act,” the formation of a “definite and permanent idea” in the “mind” of the inventor. Machines don’t have minds, so they can’t conceive. Nearly every other jurisdiction gave the same answer: the European Patent Office, Australia, the UK, South Korea, Taiwan, New Zealand and Canada all rejected the applications. South Africa granted the patent, but without substantive examination. In the U.S., the Supreme Court effectively closed the door twice: first on the patent inventorship question, declining to hear Thaler v. Vidal in April 2023, and then on the parallel copyright authorship question, declining to hear Thaler v. Perlmutter more recently. Under current U.S. law, a purely AI-generated invention cannot name an AI as inventor, and a purely AI-generated work without human authorship cannot receive copyright protection. Those rulings do not, however, foreclose protection for AI-assisted work with sufficient human contribution.

But not every jurisdiction slammed the door the same way. Germany’s Federal Patent Court accepted a compromise, listing the inventor as “Stephen L. Thaler, Ph.D. who prompted the artificial intelligence DABUS to create the invention.” Switzerland’s Federal Administrative Court went further in June 2025, indicating that AI-assisted inventions can still proceed if a human contributor, such as someone who provided training data, shaped the AI’s processing, and recognized the output as patentable, is properly named as inventor. Collectively, such decisions indicate that the international system is working toward a framework that U.S. law just refused to build.

In an interview with R&D World, Thaler pushed back on Germany’s characterization of him as having “prompted” DABUS to create the inventions. “They didn’t ask me if that’s the correct statement to make,” he said. “DABUS is not a prompting system,” he added. His broader frustration is with a system that he believes has caught up to ideas he patented decades ago without crediting him. “It keeps repeating over and over every decade,” he said. “The new generation comes in and starts raving about something that’s already been done decades ago.”

Thaler acknowledges the structural disadvantage of working outside institutions. “I have no PR department at a university I can report the R&D to,” he told R&D World. And while the DABUS test cases were litigated pro bono through attorney Ryan Abbott’s Artificial Inventor Project, Thaler says enforcing his older Creativity Machine patents against companies he believes are using his architecture is a different fight entirely. “There is tension because a couple of companies now are utilizing, borrowing my technology, which is still valid, there are operative patents,” he said. “But I can’t afford the money to face them for 10 years to try and put an end to it.”

AI-assisted discoveries are growing more common

While Thaler has been fighting in court, the industry has been filing patents on AI-assisted discoveries and simply listing the human scientists as inventors. In computer science, some companies are giving AI models unofficial credit. In the launch post for GPT-5.3-Codex, OpenAI wrote that it was its “first model that was instrumental in creating itself,” and said early versions helped debug training, manage deployment, and diagnose tests and evaluations.

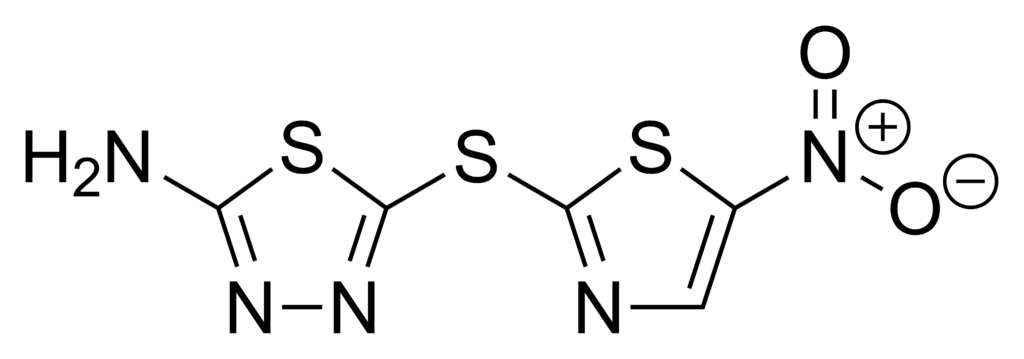

The gray zones are growing. In 2020, MIT researchers used a deep neural network to screen more than 100 million molecular compounds and identified halicin, a structurally novel antibiotic effective against drug-resistant bacteria. The compound had been developed as a potential diabetes treatment; its antibacterial properties were only discovered because AI was directed to search for them. Human scientists are listed on the resulting IP, but as University of New South Wales AI researcher Toby Walsh observed: “Halicin was originally meant to treat diabetes, but its effectiveness as an antibiotic was only discovered by AI that was directed to examine a vast catalogue of drugs that could be repurposed as antibiotics. So there’s a mixture of human and machine coming into this discovery.”

Chemical diagram for halicin via Wikimedia Commons

When the machine does the generative work

A common rebuttal to AI inventorship claims is that these systems merely recombine existing knowledge, that they can optimize within constraints but not originate ideas. That position is gradually eroding. In January 2026, public reports indicated that GPT-5.2 Pro, for instance, contributed to a disproof of Erdős Problem #397, a conjecture that had lingered unsolved for decades. The result was reportedly verified by Terence Tao and formalized in the Lean proof assistant. If the claims hold, AI is not replacing mathematicians, but it is producing novel, formally verified results that weren’t in its training data.

In the lab, claims of outright autonomous machine invention remain rare, and “autonomous labs” (or self-driving labs) currently function primarily as high-level assistants rather than independent inventors or designers of experiments. But the line between human and machine involvement is growing blurrier in some cases. In February, a team from Ginkgo Bioworks and OpenAI published a preprint describing how GPT-5, connected to a robotic cloud lab in Boston, autonomously designed and executed more than 36,000 protein synthesis experiments over six months, beating the prior cost benchmark by 40%. OpenAI’s blog post made GPT-5 the subject of nearly every active verb: the system “designed” experiments, “identified” compositions, “proposed” combinations.

Human versus machine contributions

In the lab, the balance of human versus machine contributions is quickly evolving. Nobody filed a patent on the Ginkgo Bioworks and OpenAI protein synthesis results, even though OpenAI’s blog post made GPT-5 the subject of nearly every active verb.

Reshma Shetty, Ginkgo’s co-founder and COO, noted to R&D World that humans were involved in several phases of the research: improving reagent quality, deciding when to give the model access to a state-of-the-art preprint, making judgment calls the model couldn’t. But before GPT-5 had access to the internet or the preprint, it independently proposed the same cost-saving reagent swap that turned out to be central to the benchmark. Michael Jewett, the Stanford bioengineer whose lab published that benchmark, told Nature the resulting recipe was “broadly similar” to his own. In the abstract, that 40% cost reduction is exactly the kind of novel, improved process that could warrant a patent: a new method that demonstrably beats the state of the art. But whose method is it? GPT-5 proposed the key reagent swap before it ever saw the preprint; Jewett’s lab had already published the underlying work the benchmark was built on. The inventorship question is unavoidable: who conceived what?

The pattern extends across R&D. Stanford researchers reported a “Virtual Lab” in which LLM agents designed 92 nanobodies, including two with improved binding to recent SARS-CoV-2 variants. Microsoft’s AI screened 32 million candidates to identify a promising battery material. Insilico Medicine’s TNIK inhibitor for fibrosis, widely described as an “AI-discovered drug,” reached a phase 2a milestone in 2025. In every case, humans set the objective and judged the outputs while the machine did more of the generative work in between.