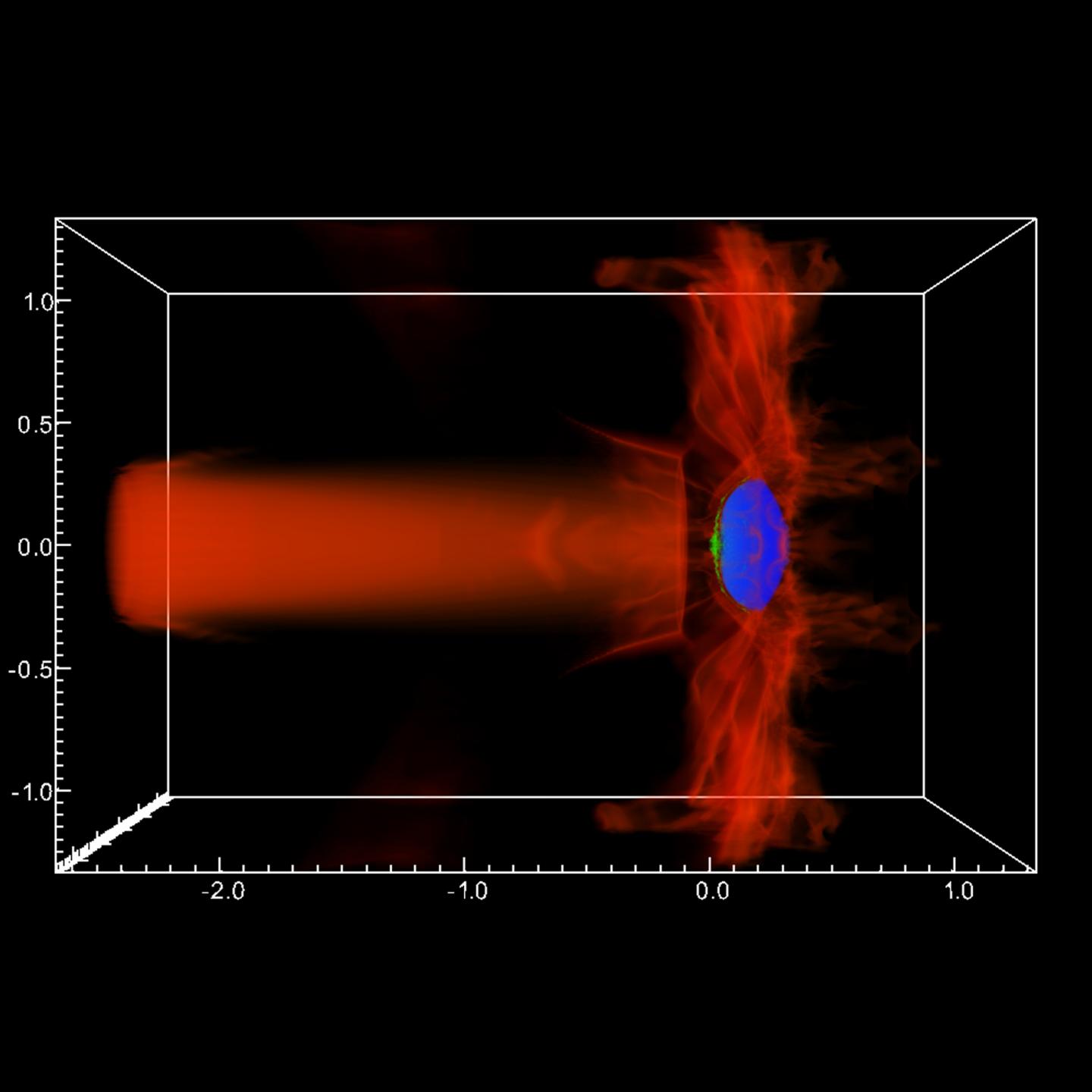

Shown here is a multi-physics simulation of an Active Galactic Nucleus (AGN) jet colliding with and triggering star formation within an intergalactic gas cloud (red indicates jet material, blue is neutral Hydrogen [H I] gas, and green is cold, molecular Hydrogen [H_2] gas). Source: Chris Fragile

Black holes make for a great space mystery. They’re so massive that nothing, not even light, can escape a black hole once it gets close enough. A great mystery for scientists is that there’s evidence of powerful jets of electrons and protons that shoot out of the top and bottom of some black holes. Yet no one knows how these jets form.

Computer code called Cosmos now fuels supercomputer simulations of black hole jets and is starting to reveal the mysteries of black holes and other space oddities.

“Cosmos, the root of the name, came from the fact that the code was originally designed to do cosmology. It’s morphed into doing a broad range of astrophysics,” explained Chris Fragile, a professor in the Physics and Astronomy Department of the College of Charleston. Fragile helped develop the Cosmos code in 2005 while working as a post-doctoral researcher at the Lawrence Livermore National Laboratory (LLNL), along with Steven Murray (LLNL) and Peter Anninos (LLNL).

Fragile pointed out that Cosmos provides astrophysicists an advantage because it has stayed at the forefront of general relativistic magnetohydrodynamics (MHD). MHD simulations, which model the magnetism of electrically conducting fluids such as black hole jets, add a layer of understanding but are notoriously difficult for even the fastest supercomputers.

“The other area that Cosmos has always had some advantage in as well is that it has a lot of physics packages in it,” continued Fragile. “This was Peter Anninos’ initial motivation, in that he wanted one computational tool where he could put in everything he had worked on over the years.” Fragile listed some of the packages that include chemistry, nuclear burning, Newtonian gravity, relativistic gravity, and even radiation and radiative cooling. “It’s a fairly unique combination,” Fragile said.

The current iteration of the code is CosmosDG, which utilizes discontinuous Galerkin methods. “You take the physical domain that you want to simulate,” explained Fragile, “and you break it up into a bunch of little, tiny computational cells, or zones. You’re basically solving the equations of fluid dynamics in each of those zones.” CosmosDG has allowed a much higher order of accuracy than ever before, according to results published in the Astrophysical Journal in August 2017.

“We were able to demonstrate that we achieved many orders of magnitude more accurate solutions in that same number of computational zones,” stated Fragile. “So, particularly in scenarios where you need very accurate solutions, CosmosDG may be a way to get that with less computational expense than we would have had to use with previous methods.”
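To make the idea of zones concrete, here is a minimal sketch of breaking a domain into cells and updating a fluid quantity in each one. It is not CosmosDG and not a discontinuous Galerkin scheme: it assumes the simplest possible stand-in, a first-order upwind finite-volume update for one-dimensional linear advection on a periodic domain. The function name `advect_upwind` and all parameter values are hypothetical choices made for illustration.

```python
import math


def advect_upwind(u, velocity, dx, dt, n_steps):
    """First-order upwind update for du/dt + a * du/dx = 0 with a > 0.

    `u` holds one value per zone; the domain is treated as periodic.
    """
    courant = velocity * dt / dx
    if not 0.0 < courant <= 1.0:
        raise ValueError("need 0 < a*dt/dx <= 1 for a stable upwind step")
    u = list(u)
    for _ in range(n_steps):
        # Each zone is updated from itself and its upwind (left) neighbour.
        # For i == 0, u[i - 1] is u[-1], which gives the periodic wrap-around.
        u = [u[i] - courant * (u[i] - u[i - 1]) for i in range(len(u))]
    return u


# Break the unit interval into 100 zones and place a Gaussian pulse at x = 0.3.
n_zones = 100
dx = 1.0 / n_zones
centers = [(i + 0.5) * dx for i in range(n_zones)]
u0 = [math.exp(-((x - 0.3) / 0.05) ** 2) for x in centers]

# Advance 40 steps at a Courant number of 0.5: the pulse moves 0.2 to the right.
u1 = advect_upwind(u0, velocity=1.0, dx=dx, dt=0.5 * dx, n_steps=40)
```

A first-order scheme like this smears the pulse as it moves. Higher-order methods aim to reduce that kind of error without adding zones, which is the sort of trade-off Fragile describes above.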

XSEDE ECSS Helps Cosmos Develop

Since 2008, the Texas Advanced Computing Center (TACC) has provided computational resources for the development of the Cosmos code, about 6.5 million supercomputer core hours on the Ranger system and 3.6 million core hours on the Stampede system. XSEDE, the eXtreme Science and Engineering Discovery Environment funded by the National Science Foundation, awarded the allocation to Fragile’s group.

“I can’t praise enough how meaningful the XSEDE resources are,” Fragile said. “The science that I do wouldn’t be possible without resources like that. That’s a scale of resources that certainly a small institution like mine could never support. The fact that we have these national-level resources enables a huge amount of science that just wouldn’t get done otherwise.”

And the fact is that busy scientists can sometimes use a hand with their code. In addition to access, XSEDE also provides a pool of experts through the Extended Collaborative Support Services (ECSS) effort to help researchers take full advantage of some of the world’s most powerful supercomputers.

Fragile has recently enlisted the help of XSEDE ECSS to optimize the CosmosDG code for Stampede2, a supercomputer capable of 18 petaflops and the flagship of TACC at The University of Texas at Austin. Stampede2 features 4,200 Knights Landing (KNL) nodes and 1,736 Intel Xeon Skylake nodes.

Taking Advantage of Knights Landing and Stampede2

The manycore architecture of KNL presents new challenges for researchers trying to get the best compute performance, according to Damon McDougall, a research associate at TACC and also at the Institute for Computational Engineering and Sciences, UT Austin. Each Stampede2 KNL node has 68 cores, with four hardware threads per core. That’s a lot of moving pieces to coordinate.

“This is a computer chip that has lots of cores compared to some of the other chips one might have interacted with on other systems,” McDougall explained. “More attention needs to be paid to the design of software to run effectively on those types of chips.”

Through ECSS, McDougall has helped Fragile optimize CosmosDG for Stampede2. “We promote a certain type of parallelism, called hybrid parallelism, where you might mix Message Passing Interface (MPI) protocols, which is a way of passing messages between compute nodes, and OpenMP, which is a way of communicating on a single compute node,” McDougall said. “Mixing those two parallel paradigms is something that we encourage for these types of architectures. That’s the type of advice we can help give and help scientists to implement on Stampede2 through the ECSS program.”

“By reducing how much communication you need to do,” Fragile said, “that’s one of the ideas of where the gains are going to come from on Stampede2. But it does mean a bit of work for legacy codes like ours that were not built to use OpenMP. We’re having to retrofit our code to include some OpenMP calls. That’s one of the things Damon has been helping us try to make this transition as smoothly as possible.”
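The trade-off behind hybrid parallelism can be sketched with simple counting. The example below does not call MPI or OpenMP and does not reflect how CosmosDG decomposes its grid. It assumes a toy one-dimensional, non-periodic domain in which each pair of neighbouring MPI ranks exchanges one boundary message in each direction per time step. The 68 cores and four hardware threads per core come from the Stampede2 figures above; the function names are hypothetical.

```python
CORES_PER_NODE = 68         # Stampede2 KNL node, as quoted in the article
HW_THREADS_PER_CORE = 4     # hardware threads per KNL core


def hybrid_layouts(cores_per_node):
    """All (MPI ranks, OpenMP threads per rank) pairs that fill one node."""
    return [(ranks, cores_per_node // ranks)
            for ranks in range(1, cores_per_node + 1)
            if cores_per_node % ranks == 0]


def split_zones(n_zones, n_parts):
    """Contiguous, near-equal blocks of zones as (start, stop) index pairs."""
    base, extra = divmod(n_zones, n_parts)
    blocks, start = [], 0
    for part in range(n_parts):
        stop = start + base + (1 if part < extra else 0)
        blocks.append((start, stop))
        start = stop
    return blocks


def halo_messages_per_step(n_ranks):
    """Boundary messages per step in the toy 1D model.

    Each of the (n_ranks - 1) interior boundaries carries two messages,
    one in each direction.
    """
    return 2 * (n_ranks - 1)


layouts = hybrid_layouts(CORES_PER_NODE)
for ranks, threads in layouts:
    print(f"{ranks:2d} ranks x {threads:2d} threads -> "
          f"{halo_messages_per_step(ranks):3d} messages per step")
```

In this toy model, running 68 single-threaded ranks on a node needs 134 boundary messages per step, while 4 ranks with 17 threads each needs 6. The work inside each rank's block of zones is then shared among threads through memory rather than messages, which is the reduction in communication Fragile refers to.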

McDougall described the ECSS work so far with CosmosDG as “very nascent and ongoing,” with much initial work sleuthing memory allocation ‘hot spots’ where the code slows down.

“One of the things that Damon McDougall has really been helpful with is helping us make the codes more efficient and helping us use the XSEDE resources more efficiently so that we can do even more science with the level of resources that we’re being provided,” Fragile added.

Black Hole Wobble

Some of the science Fragile and colleagues have already done with the help of the Cosmos code has helped study accretion, the fall of molecular gases and space debris into a black hole. Black hole accretion powers its jets. “One of the things I guess I’m most famous for is studying accretion disks where the disk is tilted,” explained Fragile.

Black holes spin, and so does the disk of gas and debris that surrounds a black hole and falls into it. However, the two can spin on different axes of rotation. “We were the first people to study cases where the axis of rotation of the disk is not aligned with the axis of rotation of the black hole,” Fragile said. General relativity shows that rotating bodies can exert a torque on other rotating bodies that aren’t aligned with them.

Fragile’s simulations showed the black hole wobbles, a movement called precession, from the torque of the spinning accretion disk. “The really interesting thing is that over the last five years or so, observers–the people who actually use telescopes to study black hole systems–have seen evidence that the disks might actually be doing this precession that we first showed in our simulations,” Fragile said.

Fragile and colleagues use the Cosmos code to study other space oddities such as tidal disruption events, which happen when a molecular cloud or star passes close enough that a black hole shreds it. Other examples include Minkowski’s Object, where Cosmos simulations support observations that a black hole jet collides with a molecular cloud to trigger star formation.

Golden Age of Astronomy and Computing

“We’re living in a golden age of astronomy,” Fragile said, referring to the wealth of knowledge generated from space telescopes like Hubble to the upcoming James Webb Space Telescope, to land-based telescopes such as Keck, and more.

Computing has helped support the success of astronomy, Fragile said. “What we do in modern-day astronomy couldn’t be done without computers,” he concluded. “The simulations that I do are two-fold. They’re to help us better understand the complex physics behind astrophysical phenomena. But they’re also to help us interpret and predict observations that either have been, can be, or will be made in astronomy.”